Comments (4)

@flashcp I think you're misunderstanding the 'b_k'.

In this paper, `b_k` is the belief mass, `b_k = e_k / S = (\alpha_k - 1) / S`, whereas the category probability is `p_k = \alpha_k / S`.

For the Master Yoda example, the evidence is `e_k = 0` for each of the `K` categories, so `b_k = 0` and `\alpha_k = 1`. Therefore the uncertainty is `u = 1 - sum_k(b_k) = 1` and the class probability is `p_k = 1 / K`.
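For anyone who wants to check these formulas numerically, here is a minimal sketch in plain Python. The function name `edl_quantities` is made up for illustration and does not come from the repository; it assumes non-negative evidence and `\alpha_k = e_k + 1`, as in the definitions above.

```python
def edl_quantities(evidence):
    """Return (belief, uncertainty, prob) for a list of non-negative evidence values.

    Hypothetical helper for illustration only, not part of the repository.
    """
    K = len(evidence)
    alpha = [e + 1.0 for e in evidence]  # alpha_k = e_k + 1
    S = sum(alpha)                       # Dirichlet strength S = sum_k alpha_k
    belief = [e / S for e in evidence]   # b_k = e_k / S
    uncertainty = K / S                  # u = K / S, equal to 1 - sum_k b_k
    prob = [a / S for a in alpha]        # p_k = alpha_k / S
    return belief, uncertainty, prob


# Zero evidence for all K = 10 classes (the "Master Yoda" case).
belief, u, prob = edl_quantities([0.0] * 10)
print(u, prob[0])
```

With zero evidence this gives `u = 1` and `p_k = 1 / K`, and for any evidence vector `u + sum_k(b_k)` comes out as 1 up to floating-point rounding.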

from pytorch-classification-uncertainty.

Hi, everyone.

I applied this method to MobileNetV2.

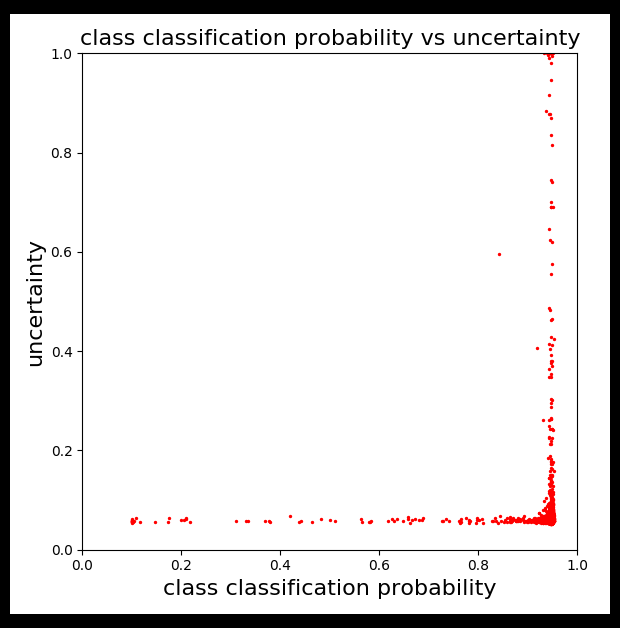

The predicted probabilities look reasonable, but the uncertainty does not.

As @Cogito2012 said, u + sum_k(b_k) could be close to 1.0.

My understanding is that probability and uncertainty should be inversely related.

However, the result does not show that.

This image was generated from the MNIST validation dataset.

Metrics

accuracy: 0.9936

precision: 0.9936327472490634

recall: 0.9936

f1: 0.9936039409292189

| class | precision | recall | f1-score | support |
|---|---|---|---|---|
| 0 | 0.97898 | 0.99796 | 0.98838 | 980 |
| 1 | 0.99648 | 0.99736 | 0.99692 | 1135 |
| 2 | 0.99612 | 0.99612 | 0.99612 | 1032 |
| 3 | 0.99604 | 0.99505 | 0.99554 | 1010 |
| 4 | 0.99287 | 0.99287 | 0.99287 | 982 |
| 5 | 0.99551 | 0.99439 | 0.99495 | 892 |
| 6 | 0.99895 | 0.98956 | 0.99423 | 958 |
| 7 | 0.99220 | 0.99027 | 0.99124 | 1028 |
| 8 | 0.99487 | 0.99487 | 0.99487 | 974 |
| 9 | 0.99401 | 0.98712 | 0.99055 | 1009 |
| accuracy | | | 0.99360 | 10000 |
| macro avg | 0.99360 | 0.99356 | 0.99357 | 10000 |
| weighted avg | 0.99363 | 0.99360 | 0.99360 | 10000 |

I could not find a scatter plot of probability versus uncertainty in Murat Sensoy's original paper.

Has anyone reproduced a result like the one above?

Thanks a lot.


@takuya-takeuchi From your figure, it seems that you plotted the uncertainty (u) against the maximum probability over all classes, i.e. max{p_1, p_2, ..., p_K}, because most of the dots are close to p=1.0. However, according to the equations in the EDL paper, I don't think there will be a relationship between u and max{p_1, p_2, ..., p_K}.
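To illustrate the point, here is a small sketch in plain Python with two hand-picked evidence vectors for K = 3. The helper `max_prob_and_uncertainty` and the evidence values are invented for this example; it only assumes the definitions `\alpha_k = e_k + 1`, `p_k = \alpha_k / S` and `u = K / S`.

```python
def max_prob_and_uncertainty(evidence):
    """Return (max_k p_k, u) for a list of non-negative evidence values.

    Hypothetical helper for illustration only, not part of the repository.
    """
    K = len(evidence)
    S = sum(evidence) + K               # S = sum_k (e_k + 1)
    max_prob = (max(evidence) + 1.0) / S  # largest p_k = alpha_k / S
    uncertainty = K / S                 # u = K / S
    return max_prob, uncertainty


# Two evidence vectors chosen so that the largest class probability matches.
case_a = max_prob_and_uncertainty([8.0, 0.0, 0.0])   # S = 11
case_b = max_prob_and_uncertainty([17.0, 2.0, 0.0])  # S = 22
print(case_a)
print(case_b)
```

Both cases have the same maximum probability, 9/11, but the uncertainty is 3/11 in the first and 3/22 in the second, so a given max probability does not pin down u. One caveat that follows from the same definitions: since `p_k = b_k + u / K`, the maximum probability cannot exceed `1 - u * (1 - 1/K)`, so points with both high u and high max probability cannot occur.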


> @flashcp I think you're misunderstanding the 'b_k'.
>
> In this paper, `b_k` is belief mass and `b_k = e_k / S = (\alpha_k - 1) / S`. However, the category probability `p_k=\alpha_k / S`.
>
> For the Master Yoda example, the evidence `e_k = 0` for each of `K` categories so that `b_k=0` and `\alpha_k = 1`. Therefore, the uncertainty `u = 1 - sum_k(b_k) = 1` and the class prob `p_k = 1 / K`.

You are right, thanks for your reply.


