Comments (2)

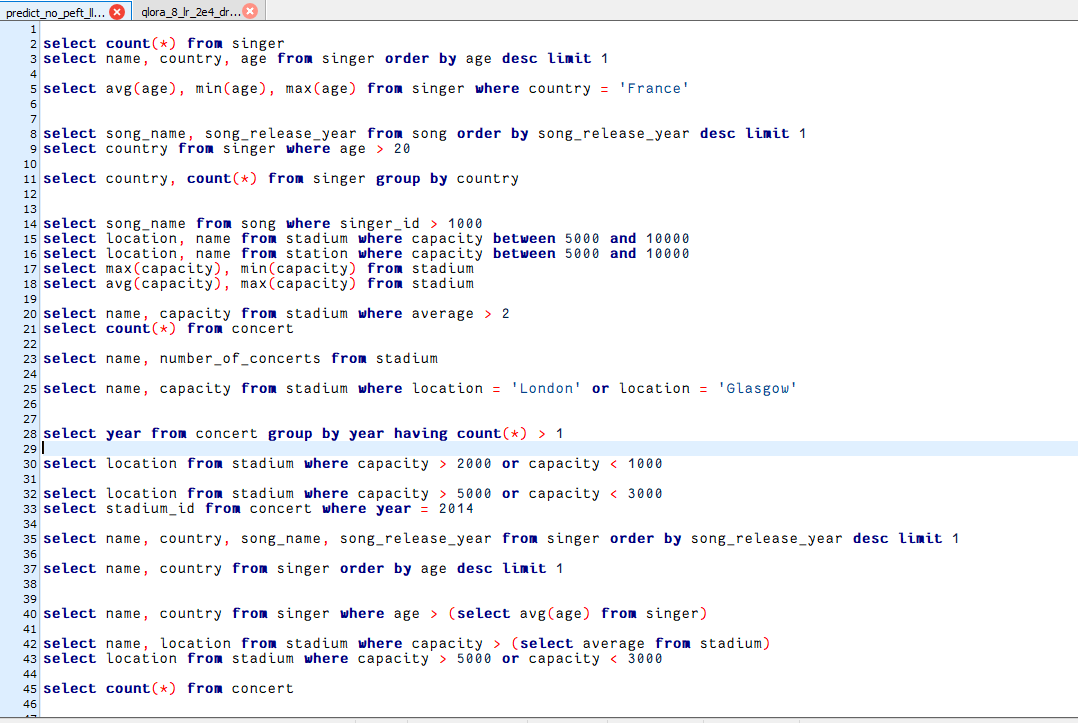

I used train_qlora.py to fine-tune Llama 2-7B and then ran get_predict_qlora.sh (with checkpoint 10000) to get the results, but many of the outputs are empty, as shown below:

This leads to poor results when running evaluation.py, as follows:

compare etype exec

| EXECUTION ACCURACY | easy | medium | hard | extra | all |
| --- | --- | --- | --- | --- | --- |
| count | 248 | 446 | 174 | 166 | 1034 |
| execution | 0.109 | 0.052 | 0.006 | 0.006 | 0.050 |

Can you help me check what went wrong?
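To put a number on "many of the outputs are empty", a small check like the one below may help. It is only a sketch: it assumes the prediction script writes one predicted SQL statement per line to a plain-text file, and the function names and the file path in the usage comment are hypothetical, not part of db-gpt-hub.

```python
from pathlib import Path


def empty_prediction_stats(lines):
    """Return (empty_count, total, empty_indices) for a list of prediction lines.

    A prediction counts as empty when it contains only whitespace.
    Indices are 0-based positions in the list.
    """
    empty_indices = [i for i, line in enumerate(lines) if not line.strip()]
    return len(empty_indices), len(lines), empty_indices


def load_predictions(path):
    """Read a predictions file, assuming one predicted SQL statement per line."""
    return Path(path).read_text(encoding="utf-8").split("\n")


# Hypothetical usage (replace the path with your own prediction file):
#   lines = load_predictions("pred_sql.txt")
#   empty, total, where = empty_prediction_stats(lines)
#   print(f"{empty} of {total} predictions are empty; first few: {where[:10]}")
```

Comparing this count across checkpoints (for example 2500 and 10000) would show whether the empty outputs grow with the number of training steps.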

I also ran a 10,000-step experiment with LoRA, and the results did not improve. Instead, there was a large drop similar to yours. I am also puzzled by the report from another user in the issues, who trained for 10,000 steps with QLoRA on the default parameters and got a big improvement. So we do not recommend training QLoRA for 10,000 steps. We are preparing to release the results of our recent experiments as soon as possible. In our experiments, the results are better when the number of steps is below 2,500.

from db-gpt-hub.

When I use checkpoint 2500, the result is:

compare etype exec

| EXECUTION ACCURACY | easy | medium | hard | extra | all |
| --- | --- | --- | --- | --- | --- |
| count | 248 | 446 | 174 | 166 | 1034 |
| execution | 0.194 | 0.076 | 0.029 | 0.006 | 0.085 |

What about yours? @wangzaistone
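As a sanity check on the numbers reported in this thread, the "all" column appears to be the count-weighted average of the per-difficulty execution accuracies. The sketch below recomputes it from the reported values; the helper name `weighted_overall` is made up for illustration, and the small differences from the reported figures are expected because the per-difficulty values are rounded to three decimals.

```python
# Number of examples per difficulty level, as reported in the thread.
COUNTS = {"easy": 248, "medium": 446, "hard": 174, "extra": 166}

# Per-difficulty execution accuracy reported for each checkpoint.
CKPT_10000 = {"easy": 0.109, "medium": 0.052, "hard": 0.006, "extra": 0.006}
CKPT_2500 = {"easy": 0.194, "medium": 0.076, "hard": 0.029, "extra": 0.006}


def weighted_overall(accuracy, counts):
    """Count-weighted mean of per-difficulty accuracies."""
    total = sum(counts.values())
    return sum(accuracy[level] * counts[level] for level in counts) / total


overall_10000 = weighted_overall(CKPT_10000, COUNTS)  # reported "all": 0.050
overall_2500 = weighted_overall(CKPT_2500, COUNTS)    # reported "all": 0.085
```

Both recomputed values land within 0.001 of the reported "all" column, so the overall gain from checkpoint 10000 to checkpoint 2500 comes mostly from the easy and medium buckets, while the extra bucket is unchanged.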

