Comments (30)

I finally managed to find a workaround for it:

- First, create a new token under API & Keys (note down your Bearer Token)

- Copy your Kubeconfig from Rancher that is generated for you

- Change the "token" value in the copied Kubeconfig to the new Bearer Token

- Create a new Secret in the "fleet-default" namespace of the "local" cluster. (If you can't see the namespace directly, go to Namespaces in the System project and move "fleet-default" into the System project.) Name the Secret as given in the error: for secrets "c-xyz123-kubeconfig" not found, call it "c-xyz123-kubeconfig". The key is "value" and the value is the modified Kubeconfig.

Note: you can use your generated Kubeconfig directly, but as it is shared with others, it is safer to create a new token that can be revoked if needed.

Now, fleet-controller should have the correct secret and start deploying fleet-agent on the cluster "c-xyz123".
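The last step above can also be done from the command line. This is only a minimal sketch, assuming kubectl is pointed at the Rancher "local" cluster and the modified Kubeconfig is saved to a file. The function names, the cluster ID c-xyz123, and the file path are placeholders, not anything Rancher provides.

```shell
#!/bin/sh
# Sketch only: function names, cluster ID and file path are hypothetical.

# Derive the Secret name that fleet-controller asks for, e.g.
#   secrets "c-xyz123-kubeconfig" not found  ->  c-xyz123-kubeconfig
kubeconfig_secret_name() {
    printf '%s-kubeconfig\n' "$1"
}

# Create the Secret in fleet-default with the key "value" holding the
# modified Kubeconfig. Must run against the Rancher "local" cluster.
create_kubeconfig_secret() {
    cluster_id="$1"
    kubeconfig_file="$2"
    kubectl create secret generic "$(kubeconfig_secret_name "$cluster_id")" \
        --namespace fleet-default \
        --from-file=value="$kubeconfig_file"
}

# Example (not executed here):
#   create_kubeconfig_secret c-xyz123 ./modified-kubeconfig.yaml
```

Using `--from-file=value=<path>` stores the whole file under the key "value", which matches the key name described in the steps.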

But there is definitely a bug that prevents creation of this secret.

For us, 2 out of 4 clusters were automatically imported, while the others were not.

The main difference is that the non-working clusters were created a long time ago (shortly after the Rancher 2 release).

from fleet.

The reason is the absence of the `authn.management.cattle.io/kind=agent` label on agent tokens for old clusters. So run `kubectl label tokens agent-${agent user name} 'authn.management.cattle.io/kind=agent'` on the local cluster and wait for rancher-operator to complete your cluster configuration (this may take around 20 minutes). The agent user name can be found with `kubectl get users -o custom-columns=NAME:.metadata.name,PrincipalIDs:.principalIds`, by the principal `system://${cluster name}`.
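The lookup and the labeling can be chained roughly as follows. This is a sketch under assumptions: the helper names are made up, and the awk filter assumes the custom-columns output has a header row and prints the principal list in a form like `[system://c-xyz123 local://u-abc]`, which may differ in your kubectl version.

```shell
#!/bin/sh
# Sketch only: helper names are hypothetical, and the table layout is assumed.

# Reads the output of
#   kubectl get users -o custom-columns=NAME:.metadata.name,PrincipalIDs:.principalIds
# on stdin and prints the user whose principals include system://<cluster name>.
agent_user_for_cluster() {
    awk -v principal="system://$1" '
        NR > 1 {                          # skip the header row
            for (i = 2; i <= NF; i++) {
                field = $i
                gsub(/\[/, "", field)     # strip list brackets and commas
                gsub(/\]/, "", field)
                gsub(/,/, "", field)
                if (field == principal) print $1
            }
        }'
}

# Adds the missing label to the agent token of the given user.
label_agent_token() {
    kubectl label tokens "agent-$1" 'authn.management.cattle.io/kind=agent'
}

# Example (not executed here), run against the local cluster:
#   user="$(kubectl get users -o custom-columns=NAME:.metadata.name,PrincipalIDs:.principalIds \
#           | agent_user_for_cluster c-xyz123)"
#   label_agent_token "$user"
```

The filter compares whole principal IDs rather than substrings, so a cluster named c-xyz12 does not match c-xyz123.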

see:

- https://github.com/rancher/rancher-operator/blob/v0.1.2/pkg/controllers/cluster/controller.go#L249

- https://github.com/rancher/rancher-operator/blob/v0.1.2/pkg/controllers/cluster/controller.go#L113

from fleet.

@gofrolist Yeah, this has something to do with the kubeconfig that we generate for fleet to access the fleet management plane. I suspect that your custom ca-certs is a big blob of data that has to fit into the secret. We encode the last applied spec into an annotation, and that's probably why it is breaking the limit.

I tested with minified cacerts.pem file where I put only required certs for our infrastructure and it works!

But I was initially confused, because the ca-bundle is the default one that comes with the ca-certificates package, and no such limitation is mentioned in the documentation.

# repoquery -l ca-certificates | grep ca-bundle.crt

/etc/pki/tls/certs/ca-bundle.crt

from fleet.

It seems to me the issue persists.

Jun 12 23:19:07 ifa rancher-system-agent[38312]: time="2022-06-12T23:19:07+02:00" level=error msg="error while appending ca cert to pool for probe kube-controller-manager"

Jun 12 23:19:07 ifa rancher-system-agent[38312]: time="2022-06-12T23:19:07+02:00" level=error msg="error loading CA cert for probe (kube-scheduler) /var/lib/rancher/rke2/server/tls/kube-scheduler/kube-scheduler.crt: open /var/lib/rancher/rke2/server/tls/kube-scheduler/kube-scheduler.crt: no such file or directory"

Jun 12 23:19:07 ifa rancher-system-agent[38312]: time="2022-06-12T23:19:07+02:00" level=error msg="error while appending ca cert to pool for probe kube-scheduler"

Jun 12 23:19:11 ifa rancher-system-agent[38312]: time="2022-06-12T23:19:11+02:00" level=error msg="[K8s] received secret to process that was older than the last secret operated on. (16737291 vs 16737452)"

Jun 12 23:19:11 ifa rancher-system-agent[38312]: time="2022-06-12T23:19:11+02:00" level=error msg="error syncing 'fleet-default/custom-dd6e66f17cc7-machine-plan': handler secret-watch: secret received was too old, requeuing"

from fleet.

@jiaqiluo can you try with the latest master-head? I just deployed and retested this, and it seems to be resolved.

from fleet.

rancher:master-1a82a4d3692140573e0bfa6fb46c5cf9757c0d83-head

see the same issue in the local cluster

from fleet.

This is not seen anymore on rancher:master-head, so closing it for now.

from fleet.

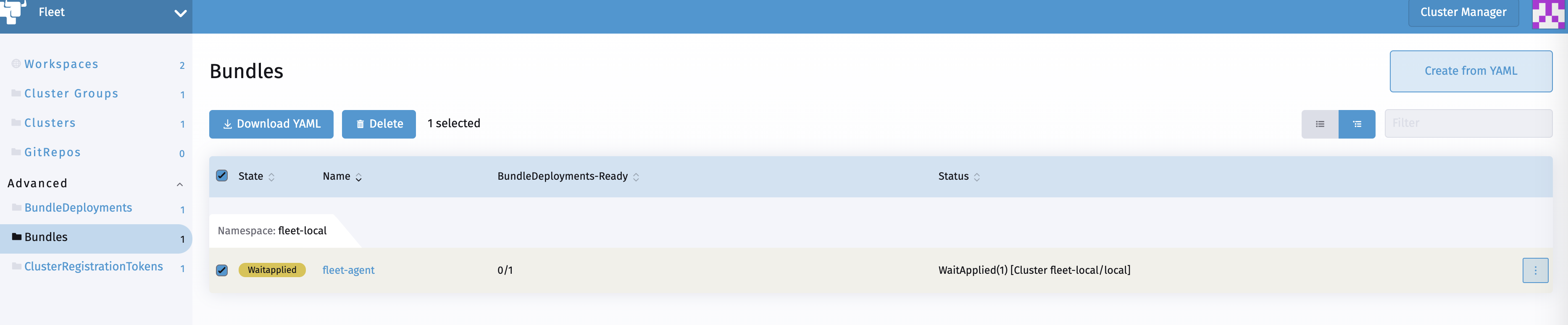

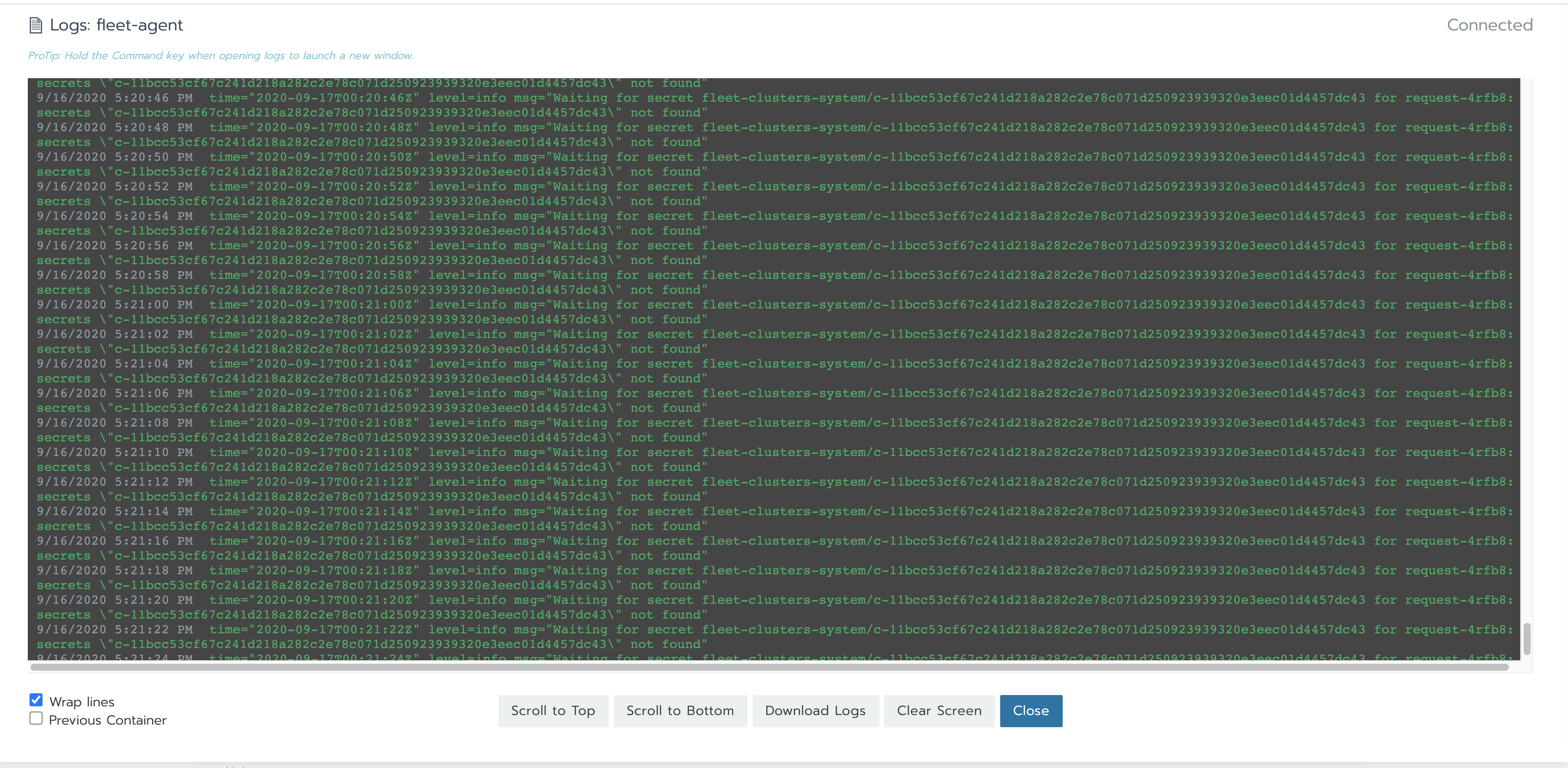

I have the same issue with Rancher v2.5.2 release.

secrets "c-2ft5x-kubeconfig" not found

logs from fleet-controller:

time="2020-11-13T20:20:12Z" level=info msg="No access to list CRDs, assuming CRDs are pre-created."

I1113 20:20:12.514326 1 leaderelection.go:242] attempting to acquire leader lease fleet-system/fleet-controller-lock...

I1113 20:20:12.518473 1 leaderelection.go:252] successfully acquired lease fleet-system/fleet-controller-lock

E1113 20:20:12.523846 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.Bundle: the server could not find the requested resource (get bundles.meta.k8s.io)

E1113 20:20:12.523875 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.Content: the server could not find the requested resource (get contents.meta.k8s.io)

E1113 20:20:12.526177 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterGroup: the server could not find the requested resource (get clustergroups.meta.k8s.io)

E1113 20:20:12.526287 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.GitRepo: the server could not find the requested resource (get gitrepos.meta.k8s.io)

E1113 20:20:12.526398 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterRegistrationToken: the server could not find the requested resource (get clusterregistrationtokens.meta.k8s.io)

E1113 20:20:12.527709 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.GitJob: the server could not find the requested resource (get gitjobs.meta.k8s.io)

E1113 20:20:12.529007 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.BundleNamespaceMapping: the server could not find the requested resource (get bundlenamespacemappings.meta.k8s.io)

E1113 20:20:12.537730 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterRegistration: the server could not find the requested resource (get clusterregistrations.meta.k8s.io)

E1113 20:20:12.550328 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.Cluster: the server could not find the requested resource (get clusters.meta.k8s.io)

E1113 20:20:12.553010 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.BundleDeployment: the server could not find the requested resource (get bundledeployments.meta.k8s.io)

E1113 20:20:12.554374 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.GitRepoRestriction: the server could not find the requested resource (get gitreporestrictions.meta.k8s.io)

E1113 20:20:13.509753 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.GitRepo: the server could not find the requested resource (get gitrepos.meta.k8s.io)

E1113 20:20:13.526283 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.GitJob: the server could not find the requested resource (get gitjobs.meta.k8s.io)

E1113 20:20:13.565483 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.Bundle: the server could not find the requested resource (get bundles.meta.k8s.io)

E1113 20:20:13.602353 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.Cluster: the server could not find the requested resource (get clusters.meta.k8s.io)

E1113 20:20:13.606585 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.BundleDeployment: the server could not find the requested resource (get bundledeployments.meta.k8s.io)

E1113 20:20:13.654185 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.BundleNamespaceMapping: the server could not find the requested resource (get bundlenamespacemappings.meta.k8s.io)

E1113 20:20:13.654321 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterRegistrationToken: the server could not find the requested resource (get clusterregistrationtokens.meta.k8s.io)

E1113 20:20:13.737937 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.Content: the server could not find the requested resource (get contents.meta.k8s.io)

E1113 20:20:13.972256 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.GitRepoRestriction: the server could not find the requested resource (get gitreporestrictions.meta.k8s.io)

E1113 20:20:13.977728 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterGroup: the server could not find the requested resource (get clustergroups.meta.k8s.io)

E1113 20:20:14.056618 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterRegistration: the server could not find the requested resource (get clusterregistrations.meta.k8s.io)

E1113 20:20:15.248786 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.Cluster: the server could not find the requested resource (get clusters.meta.k8s.io)

E1113 20:20:15.460920 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.BundleDeployment: the server could not find the requested resource (get bundledeployments.meta.k8s.io)

E1113 20:20:15.465717 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.Content: the server could not find the requested resource (get contents.meta.k8s.io)

E1113 20:20:15.673136 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterGroup: the server could not find the requested resource (get clustergroups.meta.k8s.io)

E1113 20:20:16.004653 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.Bundle: the server could not find the requested resource (get bundles.meta.k8s.io)

E1113 20:20:16.226803 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.BundleNamespaceMapping: the server could not find the requested resource (get bundlenamespacemappings.meta.k8s.io)

E1113 20:20:16.241715 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.GitJob: the server could not find the requested resource (get gitjobs.meta.k8s.io)

E1113 20:20:16.495231 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.GitRepo: the server could not find the requested resource (get gitrepos.meta.k8s.io)

E1113 20:20:16.524917 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.GitRepoRestriction: the server could not find the requested resource (get gitreporestrictions.meta.k8s.io)

E1113 20:20:16.764901 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterRegistration: the server could not find the requested resource (get clusterregistrations.meta.k8s.io)

E1113 20:20:16.816574 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterRegistrationToken: the server could not find the requested resource (get clusterregistrationtokens.meta.k8s.io)

time="2020-11-13T20:20:23Z" level=info msg="Starting fleet.cattle.io/v1alpha1, Kind=ClusterRegistrationToken controller"

time="2020-11-13T20:20:23Z" level=info msg="Starting rbac.authorization.k8s.io/v1, Kind=Role controller"

time="2020-11-13T20:20:23Z" level=info msg="Starting rbac.authorization.k8s.io/v1, Kind=ClusterRoleBinding controller"

time="2020-11-13T20:20:23Z" level=info msg="Starting rbac.authorization.k8s.io/v1, Kind=RoleBinding controller"

time="2020-11-13T20:20:23Z" level=info msg="Starting fleet.cattle.io/v1alpha1, Kind=Content controller"

time="2020-11-13T20:20:23Z" level=info msg="Starting fleet.cattle.io/v1alpha1, Kind=GitRepo controller"

time="2020-11-13T20:20:23Z" level=info msg="Starting fleet.cattle.io/v1alpha1, Kind=ClusterGroup controller"

time="2020-11-13T20:20:23Z" level=info msg="Starting fleet.cattle.io/v1alpha1, Kind=Cluster controller"

time="2020-11-13T20:20:23Z" level=info msg="Starting rbac.authorization.k8s.io/v1, Kind=ClusterRole controller"

time="2020-11-13T20:20:23Z" level=info msg="Starting fleet.cattle.io/v1alpha1, Kind=BundleNamespaceMapping controller"

time="2020-11-13T20:20:23Z" level=info msg="Starting /v1, Kind=ConfigMap controller"

time="2020-11-13T20:20:23Z" level=info msg="Starting /v1, Kind=Secret controller"

time="2020-11-13T20:20:23Z" level=info msg="Starting fleet.cattle.io/v1alpha1, Kind=ClusterRegistration controller"

time="2020-11-13T20:20:23Z" level=info msg="Starting gitjob.cattle.io/v1, Kind=GitJob controller"

time="2020-11-13T20:20:23Z" level=info msg="Starting fleet.cattle.io/v1alpha1, Kind=GitRepoRestriction controller"

time="2020-11-13T20:20:23Z" level=info msg="Starting fleet.cattle.io/v1alpha1, Kind=Bundle controller"

time="2020-11-13T20:20:23Z" level=info msg="Starting /v1, Kind=Namespace controller"

time="2020-11-13T20:20:23Z" level=info msg="Starting fleet.cattle.io/v1alpha1, Kind=BundleDeployment controller"

time="2020-11-13T20:20:23Z" level=info msg="Starting /v1, Kind=ServiceAccount controller"

time="2020-11-13T20:20:23Z" level=info msg="All controllers have been started"

time="2020-11-13T20:20:24Z" level=info msg="Deployed new agent for cluster fleet-local/local"

time="2020-11-13T20:20:24Z" level=info msg="Deleted old agent for cluster fleet-local/local"

time="2020-11-13T20:20:24Z" level=info msg="Deployed new agent for cluster fleet-local/local"

time="2020-11-13T20:20:30Z" level=info msg="Cluster registration fleet-local/request-4d4lh, cluster fleet-local/local granted [false]"

time="2020-11-13T20:20:30Z" level=info msg="Cluster registration fleet-local/request-4d4lh, cluster fleet-local/local granted [true]"

time="2020-11-13T20:56:19Z" level=error msg="error syncing 'fleet-default/c-2ft5x': handler import-cluster: secrets \"c-2ft5x-kubeconfig\" not found, requeuing"

time="2020-11-13T20:56:19Z" level=error msg="error syncing 'fleet-default/c-2ft5x': handler import-cluster: secrets \"c-2ft5x-kubeconfig\" not found, requeuing"

time="2020-11-13T20:56:19Z" level=error msg="error syncing 'fleet-default/c-2ft5x': handler import-cluster: secrets \"c-2ft5x-kubeconfig\" not found, requeuing"

from fleet.

@gofrolist Can you also post logs from rancher-operator pod in rancher-operator-system namespace?

from fleet.

kubectl logs rancher-operator-5f4494c5b4-f25jv --all-containers=true -n rancher-operator-system

time="2020-11-13T20:19:50Z" level=info msg="Starting controller"

I1113 20:19:50.375347 1 leaderelection.go:242] attempting to acquire leader lease kube-system/rancher-controller-lock...

I1113 20:19:50.393043 1 leaderelection.go:252] successfully acquired lease kube-system/rancher-controller-lock

time="2020-11-13T20:19:50Z" level=info msg="No access to list CRDs, assuming CRDs are pre-created."

time="2020-11-13T20:19:50Z" level=info msg="Starting /v1, Kind=Secret controller"

time="2020-11-13T20:19:50Z" level=info msg="Starting /v1, Kind=Namespace controller"

time="2020-11-13T20:19:50Z" level=info msg="Starting apps/v1, Kind=Deployment controller"

time="2020-11-13T20:19:50Z" level=info msg="Starting apps/v1, Kind=DaemonSet controller"

E1113 20:19:50.545264 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.RoleTemplateBinding: the server could not find the requested resource (get roletemplatebindings.meta.k8s.io)

E1113 20:19:50.545588 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.RoleTemplate: the server could not find the requested resource (get roletemplates.meta.k8s.io)

E1113 20:19:50.545646 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.Cluster: the server could not find the requested resource (get clusters.meta.k8s.io)

E1113 20:19:50.545779 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.Project: the server could not find the requested resource (get projects.meta.k8s.io)

E1113 20:19:50.545829 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterGroup: the server could not find the requested resource (get clustergroups.meta.k8s.io)

E1113 20:19:50.547661 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterRegistrationToken: the server could not find the requested resource (get clusterregistrationtokens.meta.k8s.io)

E1113 20:19:50.547731 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.Cluster: the server could not find the requested resource (get clusters.meta.k8s.io)

E1113 20:19:50.547766 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.GitRepo: the server could not find the requested resource (get gitrepos.meta.k8s.io)

E1113 20:19:51.401097 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.RoleTemplate: the server could not find the requested resource (get roletemplates.meta.k8s.io)

E1113 20:19:51.532852 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.GitRepo: the server could not find the requested resource (get gitrepos.meta.k8s.io)

E1113 20:19:51.543920 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.Cluster: the server could not find the requested resource (get clusters.meta.k8s.io)

E1113 20:19:51.600746 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterGroup: the server could not find the requested resource (get clustergroups.meta.k8s.io)

E1113 20:19:51.601162 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.Cluster: the server could not find the requested resource (get clusters.meta.k8s.io)

E1113 20:19:51.617727 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.Project: the server could not find the requested resource (get projects.meta.k8s.io)

E1113 20:19:51.895689 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.RoleTemplateBinding: the server could not find the requested resource (get roletemplatebindings.meta.k8s.io)

E1113 20:19:51.911358 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterRegistrationToken: the server could not find the requested resource (get clusterregistrationtokens.meta.k8s.io)

E1113 20:19:53.533237 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.Cluster: the server could not find the requested resource (get clusters.meta.k8s.io)

E1113 20:19:53.602685 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.GitRepo: the server could not find the requested resource (get gitrepos.meta.k8s.io)

E1113 20:19:53.619977 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.Cluster: the server could not find the requested resource (get clusters.meta.k8s.io)

E1113 20:19:54.350485 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterRegistrationToken: the server could not find the requested resource (get clusterregistrationtokens.meta.k8s.io)

E1113 20:19:54.382667 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.RoleTemplate: the server could not find the requested resource (get roletemplates.meta.k8s.io)

E1113 20:19:54.405574 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterGroup: the server could not find the requested resource (get clustergroups.meta.k8s.io)

E1113 20:19:54.603166 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.Project: the server could not find the requested resource (get projects.meta.k8s.io)

E1113 20:19:54.611169 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.RoleTemplateBinding: the server could not find the requested resource (get roletemplatebindings.meta.k8s.io)

E1113 20:19:56.824957 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.Cluster: the server could not find the requested resource (get clusters.meta.k8s.io)

E1113 20:19:57.310447 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.GitRepo: the server could not find the requested resource (get gitrepos.meta.k8s.io)

E1113 20:19:57.837930 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.RoleTemplate: the server could not find the requested resource (get roletemplates.meta.k8s.io)

E1113 20:19:57.993252 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.Project: the server could not find the requested resource (get projects.meta.k8s.io)

E1113 20:19:58.764227 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.Cluster: the server could not find the requested resource (get clusters.meta.k8s.io)

E1113 20:19:59.509945 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterGroup: the server could not find the requested resource (get clustergroups.meta.k8s.io)

E1113 20:20:00.026705 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1.RoleTemplateBinding: the server could not find the requested resource (get roletemplatebindings.meta.k8s.io)

E1113 20:20:00.672235 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterRegistrationToken: the server could not find the requested resource (get clusterregistrationtokens.meta.k8s.io)

E1113 20:20:04.820360 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.GitRepo: the server could not find the requested resource (get gitrepos.meta.k8s.io)

E1113 20:20:06.947592 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.Cluster: the server could not find the requested resource (get clusters.meta.k8s.io)

E1113 20:20:08.619042 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterGroup: the server could not find the requested resource (get clustergroups.meta.k8s.io)

E1113 20:20:08.695866 1 reflector.go:178] pkg/mod/github.com/rancher/[email protected]/tools/cache/reflector.go:125: Failed to list *v1alpha1.ClusterRegistrationToken: the server could not find the requested resource (get clusterregistrationtokens.meta.k8s.io)

time="2020-11-13T20:20:32Z" level=info msg="Starting rancher.cattle.io/v1, Kind=Cluster controller"

time="2020-11-13T20:20:32Z" level=info msg="Starting rancher.cattle.io/v1, Kind=Project controller"

time="2020-11-13T20:20:32Z" level=info msg="Starting rancher.cattle.io/v1, Kind=RoleTemplateBinding controller"

time="2020-11-13T20:20:32Z" level=info msg="Starting rancher.cattle.io/v1, Kind=RoleTemplate controller"

time="2020-11-13T20:20:33Z" level=info msg="Starting management.cattle.io/v3, Kind=Project controller"

time="2020-11-13T20:20:33Z" level=info msg="Starting management.cattle.io/v3, Kind=ClusterRoleTemplateBinding controller"

time="2020-11-13T20:20:33Z" level=info msg="Starting management.cattle.io/v3, Kind=User controller"

time="2020-11-13T20:20:33Z" level=info msg="Starting management.cattle.io/v3, Kind=Token controller"

time="2020-11-13T20:20:33Z" level=info msg="Starting management.cattle.io/v3, Kind=FleetWorkspace controller"

time="2020-11-13T20:20:33Z" level=info msg="Starting management.cattle.io/v3, Kind=Cluster controller"

time="2020-11-13T20:20:33Z" level=info msg="Starting management.cattle.io/v3, Kind=Setting controller"

time="2020-11-13T20:20:33Z" level=info msg="Starting management.cattle.io/v3, Kind=ClusterRegistrationToken controller"

time="2020-11-13T20:20:33Z" level=info msg="Starting management.cattle.io/v3, Kind=ProjectRoleTemplateBinding controller"

time="2020-11-13T20:20:33Z" level=info msg="Starting management.cattle.io/v3, Kind=RoleTemplate controller"

time="2020-11-13T20:20:33Z" level=info msg="All controllers are started"

time="2020-11-13T20:20:33Z" level=info msg="Starting fleet.cattle.io/v1alpha1, Kind=ClusterRegistrationToken controller"

time="2020-11-13T20:20:33Z" level=info msg="Starting fleet.cattle.io/v1alpha1, Kind=Cluster controller"

time="2020-11-13T20:20:33Z" level=info msg="Starting fleet.cattle.io/v1alpha1, Kind=ClusterGroup controller"

time="2020-11-13T20:20:33Z" level=info msg="Starting fleet.cattle.io/v1alpha1, Kind=GitRepo controller"

time="2020-11-13T20:20:33Z" level=error msg="error syncing 'fleet-local/local': handler workspace-backport: fleetworkspaces.management.cattle.io \"fleet-local\" not found, requeuing"

time="2020-11-13T20:56:19Z" level=error msg="error syncing 'fleet-default/c-2ft5x': handler cluster-create: failed to create fleet-default/c-2ft5x-kubeconfig /v1, Kind=Secret for cluster-create fleet-default/c-2ft5x: Secret \"c-2ft5x-kubeconfig\" is invalid: metadata.annotations: Too long: must have at most 262144 bytes, requeuing"

time="2020-11-13T20:56:20Z" level=error msg="error syncing 'fleet-default/c-2ft5x': handler cluster-create: failed to create fleet-default/c-2ft5x-kubeconfig /v1, Kind=Secret for cluster-create fleet-default/c-2ft5x: Secret \"c-2ft5x-kubeconfig\" is invalid: metadata.annotations: Too long: must have at most 262144 bytes, requeuing"

time="2020-11-13T20:56:20Z" level=error msg="error syncing 'fleet-default/c-2ft5x': handler cluster-create: failed to create fleet-default/c-2ft5x-kubeconfig /v1, Kind=Secret for cluster-create fleet-default/c-2ft5x: Secret \"c-2ft5x-kubeconfig\" is invalid: metadata.annotations: Too long: must have at most 262144 bytes, requeuing"

time="2020-11-16T21:31:48Z" level=error msg="error syncing 'fleet-default/c-2ft5x': handler cluster-create: failed to create fleet-default/c-2ft5x-kubeconfig /v1, Kind=Secret for cluster-create fleet-default/c-2ft5x: Secret \"c-2ft5x-kubeconfig\" is invalid: metadata.annotations: Too long: must have at most 262144 bytes, requeuing"

from fleet.

I will reopen this issue to track. Looks like a bug that we didn't handle

from fleet.

This is probably because I mount our own ca-bundle. I did that following the custom-ca-certificate article:

-v /etc/pki/tls/certs/ca-bundle.crt:/etc/rancher/ssl/cacerts.pem -e SSL_CERT_DIR=/etc/rancher/ssl

from fleet.

@gofrolist Yeah, this has something to do with the kubeconfig that we generate for fleet to access the fleet management plane. I suspect that your custom ca-certs is a big blob of data that has to fit into the secret. We encode the last applied spec into an annotation, and that's probably why it is breaking the limit.

from fleet.

Good to hear, this is indeed an issue on our side that needs to be addressed.

from fleet.

@gofrolist what version of k8s are you on?

from fleet.

@gofrolist what version of k8s are you on?

But I also tried v1.18.10 and had the same issue. I believe this is not related to the k8s version itself but to the Rancher config.

from fleet.

Same issue here, any workaround?

from fleet.

Same issue here, any workaround?

Check the size of your kubeconfig, and try to minify the cacerts file if you have it mounted into the Rancher container.
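A rough way to check this before mounting a bundle is sketched below. It assumes the CA ends up base64-encoded inside the generated kubeconfig, which is what the thread suggests. It then compares only that encoded size against the 262144-byte annotation limit from the error message. The function names are made up, and because the real annotation holds more than the CA, passing this check does not guarantee the secret will fit.

```shell
#!/bin/sh
# Sketch only: function names are hypothetical; the limit is taken from the
# "must have at most 262144 bytes" error in the rancher-operator log.
ANNOTATION_LIMIT=262144

# Size in bytes of a file after base64 encoding (4 output bytes per 3 input).
base64_size() {
    bytes=$(wc -c < "$1")
    echo $(( (bytes + 2) / 3 * 4 ))
}

# Succeeds if the encoded CA bundle alone stays under the annotation limit.
ca_bundle_fits() {
    [ "$(base64_size "$1")" -lt "$ANNOTATION_LIMIT" ]
}

# Example (not executed here):
#   ca_bundle_fits /etc/pki/tls/certs/ca-bundle.crt || echo "bundle too large, minify it"
#   grep -c 'BEGIN CERTIFICATE' /etc/pki/tls/certs/ca-bundle.crt   # number of certs
```

If the check fails, the bundle alone is already over the limit, so trimming it to the certificates your infrastructure actually needs is the workaround reported above.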

from fleet.

Rancher is installed in k3s and fleet-local works, but after adding another cluster this error showed up. No custom CA.

from fleet.

same issue here. I have a multi cluster setup with Rancher server in EKS (1.16) and the target cluster also in EKS (1.16).

This is the error message from rancher-operator

time="2020-11-27T11:52:39Z" level=error msg="error syncing 'fleet-default/c-gdq7v': handler cluster-create: secrets \"tls-rancher-internal-ca\" not found, requeuing"

Let me know if you need more info

from fleet.

My problem was solved; it seems I deleted the CA by accident. After reinstalling Rancher, everything is working now.

from fleet.

Experiencing the same issue with the missing secret. Is there any way to manually force creation of the missing secret? I tried deleting the cluster's clusters.fleet.cattle.io resource from fleet-default; it got automatically re-added, but the kubeconfig secret is still missing.

❯ kl -f rancher-operator-dc6876565-ffdwx

time="2020-12-08T20:15:25Z" level=info msg="Starting controller"

I1208 20:15:25.751103 1 leaderelection.go:242] attempting to acquire leader lease kube-system/rancher-controller-lock...

I1208 20:15:25.776765 1 leaderelection.go:252] successfully acquired lease kube-system/rancher-controller-lock

time="2020-12-08T20:15:25Z" level=info msg="No access to list CRDs, assuming CRDs are pre-created."

E1208 20:15:25.997845 1 memcache.go:206] couldn't get resource list for metrics.k8s.io/v1beta1: the server is currently unable to handle the request

E1208 20:15:26.017683 1 memcache.go:111] couldn't get resource list for metrics.k8s.io/v1beta1: the server is currently unable to handle the request

time="2020-12-08T20:15:26Z" level=info msg="Starting /v1, Kind=Secret controller"

time="2020-12-08T20:15:26Z" level=info msg="Starting /v1, Kind=Namespace controller"

E1208 20:15:26.039156 1 memcache.go:206] couldn't get resource list for metrics.k8s.io/v1beta1: the server is currently unable to handle the request

E1208 20:15:26.051803 1 memcache.go:111] couldn't get resource list for metrics.k8s.io/v1beta1: the server is currently unable to handle the request

time="2020-12-08T20:15:26Z" level=info msg="Starting apps/v1, Kind=DaemonSet controller"

time="2020-12-08T20:15:26Z" level=info msg="Starting apps/v1, Kind=Deployment controller"

E1208 20:15:26.268632 1 memcache.go:206] couldn't get resource list for metrics.k8s.io/v1beta1: the server is currently unable to handle the request

E1208 20:15:26.276909 1 memcache.go:111] couldn't get resource list for metrics.k8s.io/v1beta1: the server is currently unable to handle the request

time="2020-12-08T20:15:26Z" level=info msg="Starting rancher.cattle.io/v1, Kind=Cluster controller"

time="2020-12-08T20:15:26Z" level=info msg="Starting rancher.cattle.io/v1, Kind=RoleTemplateBinding controller"

time="2020-12-08T20:15:26Z" level=info msg="Starting rancher.cattle.io/v1, Kind=Project controller"

time="2020-12-08T20:15:26Z" level=info msg="Starting rancher.cattle.io/v1, Kind=RoleTemplate controller"

time="2020-12-08T20:15:26Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

E1208 20:15:26.295772 1 memcache.go:206] couldn't get resource list for metrics.k8s.io/v1beta1: the server is currently unable to handle the request

time="2020-12-08T20:15:26Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:15:26Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:15:26Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

E1208 20:15:26.380832 1 memcache.go:111] couldn't get resource list for metrics.k8s.io/v1beta1: the server is currently unable to handle the request

time="2020-12-08T20:15:26Z" level=info msg="Starting management.cattle.io/v3, Kind=Project controller"

time="2020-12-08T20:15:26Z" level=info msg="Starting management.cattle.io/v3, Kind=ClusterRoleTemplateBinding controller"

time="2020-12-08T20:15:26Z" level=info msg="Starting management.cattle.io/v3, Kind=FleetWorkspace controller"

time="2020-12-08T20:15:26Z" level=info msg="Starting management.cattle.io/v3, Kind=Cluster controller"

time="2020-12-08T20:15:26Z" level=info msg="Starting management.cattle.io/v3, Kind=Setting controller"

E1208 20:15:26.432296 1 memcache.go:206] couldn't get resource list for metrics.k8s.io/v1beta1: the server is currently unable to handle the request

time="2020-12-08T20:15:26Z" level=info msg="Starting management.cattle.io/v3, Kind=Token controller"

time="2020-12-08T20:15:26Z" level=info msg="Starting management.cattle.io/v3, Kind=User controller"

time="2020-12-08T20:15:26Z" level=info msg="Starting management.cattle.io/v3, Kind=ProjectRoleTemplateBinding controller"

time="2020-12-08T20:15:26Z" level=info msg="Starting management.cattle.io/v3, Kind=ClusterRegistrationToken controller"

time="2020-12-08T20:15:26Z" level=info msg="Starting management.cattle.io/v3, Kind=RoleTemplate controller"

time="2020-12-08T20:15:26Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

E1208 20:15:26.456033 1 memcache.go:111] couldn't get resource list for metrics.k8s.io/v1beta1: the server is currently unable to handle the request

time="2020-12-08T20:15:26Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:15:26Z" level=info msg="All controllers are started"

time="2020-12-08T20:15:26Z" level=info msg="Starting fleet.cattle.io/v1alpha1, Kind=ClusterRegistrationToken controller"

time="2020-12-08T20:15:26Z" level=info msg="Starting fleet.cattle.io/v1alpha1, Kind=Cluster controller"

time="2020-12-08T20:15:26Z" level=info msg="Starting fleet.cattle.io/v1alpha1, Kind=ClusterGroup controller"

time="2020-12-08T20:15:26Z" level=info msg="Starting fleet.cattle.io/v1alpha1, Kind=GitRepo controller"

time="2020-12-08T20:15:26Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:15:27Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:15:28Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:15:52Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:15:52Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:15:52Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:15:52Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:15:52Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:15:52Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:15:52Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:15:53Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:15:54Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:15:57Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:16:02Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:16:12Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:17:11Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:17:11Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:17:11Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:17:11Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:17:11Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:17:12Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:17:12Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:17:14Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:17:16Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:17:21Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:17:32Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:18:54Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:24:21Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:35:17Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T20:51:57Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T21:08:37Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T21:25:17Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"

time="2020-12-08T21:41:57Z" level=error msg="error syncing 'fleet-default/c-p4bpn': handler cluster-create: failed to find token for cluster c-p4bpn, requeuing"
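
The retry pattern in this log is easier to see as gaps between attempts. A minimal Python sketch, assuming the logrus-style time="..." prefix shown above; the helper name requeue_gaps is made up for illustration, and the sample lines are the last six error lines of the log:

```python
import re
from datetime import datetime

# Matches the logrus-style timestamp at the start of each rancher-operator line.
TIME_RE = re.compile(r'time="([^"]+)"')


def requeue_gaps(lines, needle="failed to find token"):
    """Return the seconds between consecutive log lines that contain `needle`."""
    stamps = []
    for line in lines:
        match = TIME_RE.search(line)
        if match and needle in line:
            stamps.append(datetime.strptime(match.group(1), "%Y-%m-%dT%H:%M:%SZ"))
    return [int((b - a).total_seconds()) for a, b in zip(stamps, stamps[1:])]


# The error message is identical on every line; only the timestamp changes.
MSG = (
    "time=\"{}\" level=error msg=\"error syncing 'fleet-default/c-p4bpn': "
    "handler cluster-create: failed to find token for cluster c-p4bpn, requeuing\""
)
sample = [
    MSG.format(stamp)
    for stamp in (
        "2020-12-08T20:24:21Z",
        "2020-12-08T20:35:17Z",
        "2020-12-08T20:51:57Z",
        "2020-12-08T21:08:37Z",
        "2020-12-08T21:25:17Z",
        "2020-12-08T21:41:57Z",
    )
]
print(requeue_gaps(sample))
```

For these lines the gaps are 656 s followed by a steady 1000 s, so the retries settle at about one attempt every 16 to 17 minutes.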

from fleet.

Thanks! Exactly on the last point, the 1 cluster that didn't work for me was my very first cluster... using rancher 2.2 I think in my case.

from fleet.

This PR introduced labels on all tokens. Because it does not label existing tokens, rancher-operator fails to find the token for clusters created before the change.

from fleet.

We're seeing this too but I think this is because our upgrade from k8s 1.17 to 1.19 failed and we rolled back. This meant that various rancher related agents were rolled back as well. We saw this with the cattle-node-agent and cattle-cluster-agent deployments.

What's the best way to get these clusters re-added? Should we follow the manual steps provided in #83 (comment)? I'm hesitant to use a token created for my user for the fleet agent, it looks like the tokens for our still-working cluster uses the user agent-u-{junk}. I would try to clone this user but I'm not sure how best to generate some aspects of this kubeconfig.

It seems clear that the steps in #83 (comment) will lead to some issues. The agent token we do have points at https://rancher.cattle-system rather than Rancher's public URL for example.

from fleet.

Available to test with rancher-operator v0.1.3-rc5

from fleet.

@Ellenqs You can do the following setup:

- Set up the latest Rancher 2.2.x. Add one or two clusters.

- Upgrade the setup to 2.5.5. Go to Continuous Delivery and see whether the clusters go into an error state and fail to register.

- Then upgrade the setup to 2.5-head (or the latest rc of 2.5.6), check the rancher-operator version (should be v0.1.3-rc5), then verify whether this issue is fixed.

from fleet.

The reason is absence of authn.management.cattle.io/kind=agent label on agent tokens for old clusters. So execute on local cluster kubectl label tokens agent-${agent user name} 'authn.management.cattle.io/kind=agent' and wait for rancher-operator to complete your cluster configuration (may be around 20 minutes). Agent user name can be found in kubectl get users -o custom-columns=NAME:.metadata.name,PrincipalIDs:.principalIds by principal system://${cluster name}.

see:

- https://github.com/rancher/rancher-operator/blob/v0.1.2/pkg/controllers/cluster/controller.go#L249

- https://github.com/rancher/rancher-operator/blob/v0.1.2/pkg/controllers/cluster/controller.go#L113

This worked for us with old clusters. Found the process was sped up by assigning a cluster to fleet-local then back to fleet-default Workspace after performing the above steps.
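
For anyone with several old clusters, the lookup can be scripted. A rough Python sketch, assuming the output of kubectl get users -o json on the local cluster is passed in as text and that principalIds is a top-level list, as the custom-columns expression in the quoted comment suggests. The helper name agent_label_command and the user name u-abc123 are hypothetical; the function only builds the command string and does not run it:

```python
import json


def agent_label_command(users_json, cluster_name):
    """Build the `kubectl label` command for the agent token of `cluster_name`.

    `users_json` is the text printed by `kubectl get users -o json` on the
    local cluster. Returns None when no user has the system://<cluster> principal.
    """
    principal = f"system://{cluster_name}"
    for user in json.loads(users_json).get("items", []):
        if principal in (user.get("principalIds") or []):
            name = user["metadata"]["name"]
            # Token name follows the agent-<user name> pattern from the quoted comment.
            return f"kubectl label tokens agent-{name} authn.management.cattle.io/kind=agent"
    return None


# Hypothetical data: both user names below are invented for this example.
sample = json.dumps({"items": [
    {"metadata": {"name": "u-abc123"}, "principalIds": ["system://c-p4bpn"]},
    {"metadata": {"name": "user-xyz"}, "principalIds": ["local://user-xyz"]},
]})
print(agent_label_command(sample, "c-p4bpn"))
```

Review the printed command before running it against the local cluster.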

from fleet.

@Ellenqs You can do the following setup:

- Set up the latest Rancher 2.2.x. Add one or two clusters.

- Upgrade the setup to 2.5.5. Go to Continuous Delivery and see whether the clusters go into an error state and fail to register.

- Then upgrade the setup to 2.5-head (or the latest rc of 2.5.6), check the rancher-operator version (should be v0.1.3-rc5), then verify whether this issue is fixed.

Validated this on a Rancher HA setup.

Steps and results

- Deploy a Rancher HA setup, version v2.2.13, with cert-manager version v0.15.0.

- Create 2 downstream clusters (cls1, cls2) on Rancher v2.2.13.

- Upgrade Rancher from v2.2.13 to v2.5.5 using helm upgrade.

After Rancher is upgraded successfully, the k8s version of the local cluster is v1.15 and the rancher-operator version is v0.1.200. In Cluster Explorer -> Continuous Delivery -> Clusters, we can see the bug is reproduced.

Because the k8s version of the local cluster is too old, rancher-webhook failed to install. So upgrade the k8s version of the local cluster.

- Upgrade the k8s version of the local cluster with RKE CLI v1.2.4.

Edit the cluster.yml file to change kubernetes_version to v1.19.6-rancher1-1.

Use RKE CLI v1.2.4 to run rke up.

The k8s version of the local cluster is upgraded to v1.19.

- Upgrade Rancher to v2.5.6-rc4.

After Rancher is upgraded successfully, check that the rancher-operator is still v0.1.2.00. Check the cluster status; the two clusters are still in an error state.

- Update the Rancher catalog's branch to point to dev-v2.5, wait 10-15 minutes, and check that the rancher-operator is updated to v0.1.3.00. Go to Continuous Delivery -> Clusters; there are no errors any more and the clusters are in active state.

from fleet.

Hello All,

We are still seeing this error after upgrading Rancher to v2.5.7 and k8s to v1.18.10. The cluster-operator takes more than 20 minutes to come up, which delays the fleet-agent deployment in the downstream cluster. We are running 2000+ clusters; if each cluster takes approximately 20-30 minutes, it becomes difficult to manage deployments through Fleet.

Are there any alternatives or workarounds to speed up the Fleet registration process? What is so specific about 20 minutes?
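
One possible reading of the 20 minutes, based only on the rancher-operator log posted earlier in this thread: the last retries there are roughly 327 s, 656 s and then a constant 1000 s apart. That pattern is consistent with a per-item exponential backoff that doubles from a 5 ms base and is capped at 1000 s, which are the defaults of client-go's DefaultControllerRateLimiter. Whether rancher-operator actually relies on those defaults is an assumption here, not something confirmed in this thread. A small Python sketch of that schedule (backoff_seconds is a hypothetical helper):

```python
def backoff_seconds(failures, base=0.005, cap=1000.0):
    """Delay in seconds before the next retry after `failures` earlier failures.

    Assumed model: the delay doubles with every failure, starting at `base`,
    and never exceeds `cap`.
    """
    return min(base * 2 ** failures, cap)


# Delays around the point where the cap starts to apply.
for failures in (15, 16, 17, 18, 19):
    print(failures, round(backoff_seconds(failures), 2))
```

Under this model a cluster that has already failed many times is only retried once every 1000 s (about 17 minutes), so a fix applied in between would not be noticed until the next retry.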

from fleet.
