Comments (6)

@SimonBlanke

OK! I now understand the operating principle of Hyperactive.

Thank you for your patience and quick response!

from hyperactive.

Hello @a7258258,

the number of iterations n_iter is always equal to the number of times the objective function is called, which runs the model inside. So if running your model once takes 2 minutes the entire optimization run would take ~20 minutes (for n_iter==10).

The population of the optimization algorithm does not affect the number of iterations or function calls. If the population is equal to 10 and n_iter is 100, then each particle will do 10 steps. So each particle gets an equal share of n_iter.
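As a rough sketch of the arithmetic above (the two helper names are made up for illustration and are not part of Hyperactive, and the initial positions are ignored here for simplicity):

```python
def estimated_runtime_minutes(n_iter, minutes_per_eval):
    # One objective-function call (one model run) per iteration.
    return n_iter * minutes_per_eval


def steps_per_particle(n_iter, population):
    # The iterations are shared equally among the particles.
    return n_iter // population


print(estimated_runtime_minutes(n_iter=10, minutes_per_eval=2))  # 20
print(steps_per_particle(n_iter=100, population=10))  # 10
```

So changing the population only changes how n_iter is divided up, not the total number of model runs.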

You should also take into account that the initialize parameter selects 10 initial positions by default. So the first 10 iterations are a mix of random, grid and vertex positions in the search space. With n_iter set to 10, no particle swarm optimization takes place in your example. After the initial iterations, the particle swarm optimization starts by creating the particles at the best initial positions found.
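To make the budget split concrete, here is a minimal sketch. It assumes that every initial position consumes exactly one iteration; `split_budget` is a hypothetical helper, not a Hyperactive function:

```python
def split_budget(n_iter, n_init=10):
    # Iterations spent on the initial positions come first;
    # whatever is left over is available to the swarm.
    init_iters = min(n_iter, n_init)
    return init_iters, n_iter - init_iters


print(split_budget(10))   # (10, 0)  -> no iterations left for the swarm
print(split_budget(100))  # (10, 90)
```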

I hope I was able to help you. If you need further explanations just let me know.


@SimonBlanke

Thank you for your response, but I still don't understand the definition of population. In the PSO algorithm, each particle calculates the Fitness Value and then determines its next movement direction.

For hyperparameter optimization tasks, each particle runs a model to calculate the accuracy (Fitness Value) and then determines its movement direction. Therefore, if I set the number of particles to 5, in one iteration, five models should calculate the accuracy and iterate n_iter times.

Later, I realized that 'initialize' selects 10 init-positions by default, so I set n_iter to 100, but it still seems like only one particle iterates 100 times and runs 100 models, which is not in line with my understanding.

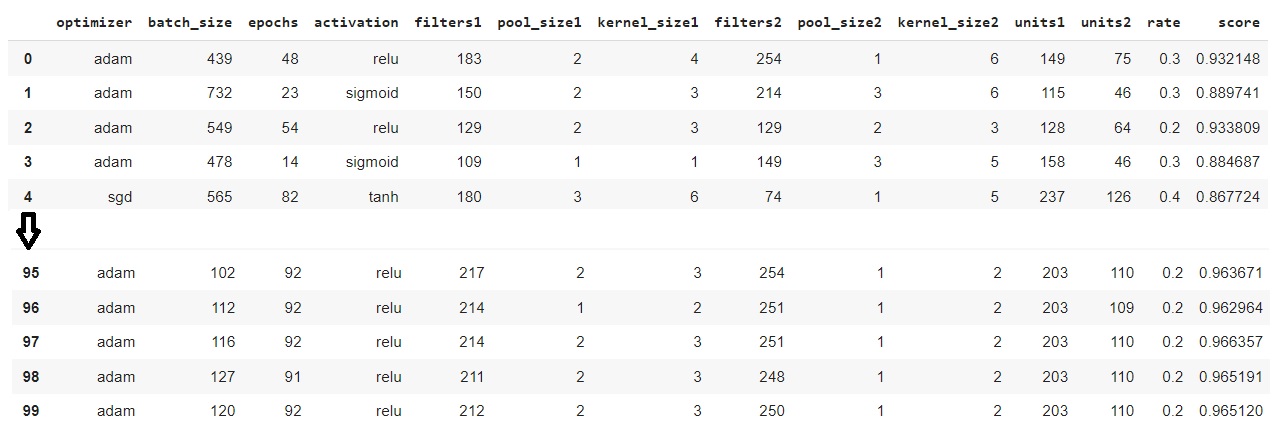

By the way, I actually started using the algorithm from LDWPSO-CNN. In his method, I can set the number of particles n_part, and the results are as I previously described, with the number of particles multiplied by the number of iterations equal to the total number of models run. However, there seems to be a memory leak problem, and it crashed after 150 iterations. I saw that he used Hyperactive, so I found this.


Hello @a7258258,

it would be easier for me if you ask specific questions.

> Thank you for your response, but I still don't understand the definition of population.

The population parameter is just an integer that determines the number of particles.

> In the PSO algorithm, each particle calculates the Fitness Value and then determines its next movement direction.

The fitness value is not calculated within the particles-class, but is passed to it in each iteration. The particle does calculate the movement, though.

> For hyperparameter optimization tasks, each particle runs a model to calculate the accuracy (Fitness Value) and then determines its movement direction. Therefore, if I set the number of particles to 5, in one iteration, five models should calculate the accuracy and iterate n_iter times.

This is not how PSO works in Hyperactive. I had to unify the API for all kinds of optimization algorithms, so n_iter has to mean the same for all algorithms.

> but it still seems like only one particle iterates 100 times and runs 100 models, which is not in line with my understanding

How did you come to this conclusion?

> By the way, I actually started using the algorithm from LDWPSO-CNN.

I remember this project from years ago, but I am not aware how it works.

> However, there seems to be a memory leak problem, and it crashed after 150 iterations.

If you are able to reproduce this error in Hyperactive you can open a bug-issue here.


@SimonBlanke

I'm sorry, maybe I didn't express myself clearly. I'll restate my question and provide an example to explain.

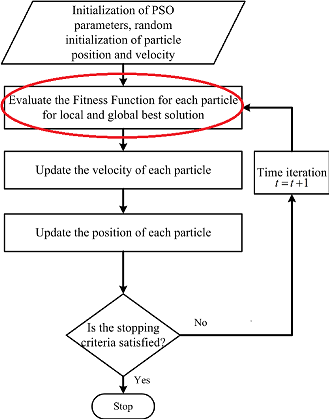

From the flowchart of the PSO algorithm, there is a step that says "Evaluate the Fitness Function for each particle".

Based on this, each particle represents a set of model hyperparameters, and the fitness value is used to evaluate each set of hyperparameters. So I think that in a single iteration all particles train a model and get a fitness value, which then determines each particle's movement direction for the next iteration.

As I mentioned before, I think population x n_iter = "Total number of models searched"

So, I'm confused about why only one model is trained in each iteration, even when the population is set to 5.

Why doesn't each of the 5 particles train a model in one iteration?

When I set the population to 5 and n_iter to 100, I noticed during the experiment that only one model was trained per iteration before moving on to the next one. And as the final result shows, it trained only 100 models to search 100 sets of hyperparameters, and the population seems to have no effect. That's why I think it's just one particle going through 100 iterations.

I hope this description helps you understand my question.


Hello @a7258258,

thank you for the detailed reply! :-)

> So, I'm confused about why only one model is trained in each iteration, even when the population is set to 5.
>
> Why doesn't each of the 5 particles train a model in one iteration?

This is just a design choice in my software to make sure that one iteration always means the same thing (for PSO, hill climbing or even Bayesian optimization). One model is trained in each iteration because I intentionally implemented it that way. But this does not change the behaviour of the algorithm. However, it does open up a way to do things after each particle receives its new score, like:

- early stopping

- updating the progress-bar

- tracking the score and positions

I designed the API that way to improve the "user experience". If you are done with your work with PSO, you can just switch to another algorithm without relearning how Hyperactive works.
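A minimal sketch of why one evaluation per iteration makes this possible. It does not use Hyperactive's API: `run_trials` and `patience` are hypothetical names, and the list of scores stands in for the results of the objective function:

```python
def run_trials(scores, patience):
    # `scores` stands in for the sequence of objective-function results.
    best, since_improvement, tracked = float("-inf"), 0, []
    for trial, score in enumerate(scores):
        tracked.append((trial, score))  # tracking happens after every trial
        if score > best:
            best, since_improvement = score, 0
        else:
            since_improvement += 1
        if since_improvement >= patience:
            break  # early stopping can trigger after any single evaluation
    return best, tracked


best, tracked = run_trials([0.5, 0.7, 0.6, 0.65, 0.7, 0.9], patience=3)
print(best, len(tracked))  # 0.7 5
```

If a whole population were evaluated per iteration, the stop check in this sketch could only run once per batch of models instead of after every single one.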

The PSO in Hyperactive cycles through the population of particles one after another. So each particle gets its model evaluation and moves to a new position. One "iteration" (from n_iter) is therefore simply defined in Hyperactive as:

- find a new position

- evaluate new position

- update progress-bar

- track data

- ...

You could also call it a trial instead of an iteration.
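The cycling described above can be sketched roughly as follows. This is a toy one-dimensional PSO written for illustration, not the code from Hyperactive's optimization backend; the objective function, the coefficients (0.5) and all names are assumptions:

```python
import random


def objective(x):
    # Toy score to maximize; stands in for "train a model, return accuracy".
    return -(x - 3.0) ** 2


def round_robin_pso(n_iter, population, seed=0):
    rng = random.Random(seed)
    pos = [rng.uniform(-10, 10) for _ in range(population)]
    vel = [0.0] * population
    best_pos = list(pos)
    best_score = [float("-inf")] * population
    global_pos, global_score = pos[0], float("-inf")
    history = []  # one (particle index, position, score) entry per iteration

    for i in range(n_iter):
        p = i % population  # cycle through the particles one after another
        score = objective(pos[p])  # exactly one evaluation per iteration
        history.append((p, pos[p], score))

        if score > best_score[p]:
            best_pos[p], best_score[p] = pos[p], score
        if score > global_score:
            global_pos, global_score = pos[p], score

        # Standard PSO velocity update for the particle that was just scored.
        vel[p] = (
            0.5 * vel[p]
            + 0.5 * rng.random() * (best_pos[p] - pos[p])
            + 0.5 * rng.random() * (global_pos - pos[p])
        )
        pos[p] += vel[p]

    return global_pos, global_score, history


best_x, best_score, history = round_robin_pso(n_iter=100, population=5)
print(len(history))  # 100 evaluations in total, 20 per particle
```

In this sketch all five particles are still updated with the usual PSO rule; the only difference from a batch-style implementation is that the loop counter advances after every single evaluation instead of after every full pass over the swarm.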

If you want to understand the inner workings of the PSO, you can take a look at the optimization backend of Hyperactive. In that repository you can also see the tests for the optimization algorithms, which make sure that the algorithms work as intended.

I also created a gif that shows the path each particle takes through a 2D search space (based on the saved position and score information in each particle).
