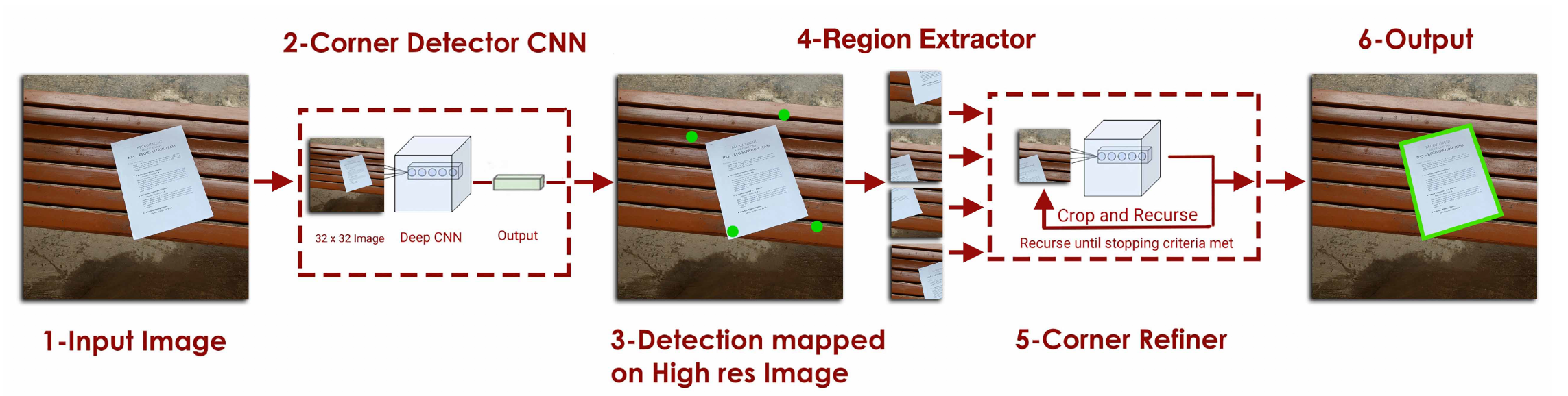

Khurram Javed, Faisal Shafait. "Real-time Document Localization in Natural Images by Recursive Application of a CNN."

Paper available at: https://khurramjaved96.github.io

- Install Tensorflow >= 1.8.0

pip install -r requirements.txt

Prerequisites:

- TensorFlow >= 1.8.0

- Python 3.6

- NumPy

- SciPy

- OpenCV 4.0.0.21 for Python

Clone the source code:

git clone -b server_branch https://github.com/xiaoyubing/Recursive-CNNs.git

To test the system, you can run the pretrained models with:

usage: python detectDocument.py [-i IMAGEPATH] [-o OUTPUTPATH] [-rf RETAINFACTOR] [-cm CORNERMODEL] [-dm DOCUMENTMODEL]

For example:

python detectDocument.py -i TrainedModel/img.jpg -o TrainedModel/result.jpg -rf 0.85

This runs the pretrained model on the sample image in the repository.
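For readers who want to see how a command line of this shape is typically parsed, here is a minimal sketch using `argparse`. The short flags are copied from the usage line above; the long option names and help strings are assumptions made for illustration and are not taken from `detectDocument.py`.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    """Build a parser that accepts the short flags listed in the usage line."""
    parser = argparse.ArgumentParser(prog="detectDocument.py")
    # Long option names below are hypothetical; only the short flags come from the README.
    parser.add_argument("-i", "--imagePath", help="path of the input image")
    parser.add_argument("-o", "--outputPath", help="path for the result image")
    parser.add_argument("-rf", "--retainFactor", type=float,
                        help="retain factor, e.g. 0.85 as in the README example")
    parser.add_argument("-cm", "--cornerModel", help="path of the corner model")
    parser.add_argument("-dm", "--documentModel", help="path of the document model")
    return parser


# Parse the README example explicitly instead of reading sys.argv.
args = build_parser().parse_args(
    ["-i", "TrainedModel/img.jpg", "-o", "TrainedModel/result.jpg", "-rf", "0.85"]
)
print(args.imagePath, args.outputPath, args.retainFactor)
```

Flags that are not passed (here `-cm` and `-dm`) are left as `None` in this sketch, so a caller could fall back to whatever pretrained models it ships with.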

- SmartDoc Competition 2 dataset: https://sites.google.com/site/icdar15smartdoc/challenge-1/challenge1dataset

- Self-collected dataset: https://drive.google.com/drive/folders/0B9Sr0v9WkqCmekhjTTY2aV9hUmM?usp=sharing

Training code is mostly for reference only. It is not well documented or commented, and it would be easier to re-implement the model from the paper than to use this code. However, I will be refactoring the code in the coming days to make it more accessible.

To prepare the dataset for training, run the following command:

python video_to_image.py --d ../path_to_smartdoc_videos/ --o ../path_to_store_frames

Here, video_to_image.py is in the SmartDocDataProcessor folder.
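As a rough illustration of what a video-to-frame conversion step involves, the sketch below decodes one video with OpenCV and writes each frame as a JPEG. It is not the code in `video_to_image.py`; the function names and the output naming scheme are hypothetical and chosen only for this example.

```python
from pathlib import Path


def frame_path(out_dir: Path, video: Path, index: int) -> Path:
    """Hypothetical naming scheme: <out_dir>/<parent folder>/<video stem>/<index>.jpg.

    The parent folder name is kept so that same-named videos stored in
    different folders do not overwrite each other's frames.
    """
    return out_dir / video.parent.name / video.stem / f"{index:06d}.jpg"


def video_to_frames(video: Path, out_dir: Path) -> int:
    """Write every frame of `video` as a JPEG and return the number of frames written."""
    import cv2  # imported lazily so frame_path() can be used without OpenCV installed

    capture = cv2.VideoCapture(str(video))
    count = 0
    try:
        while True:
            ok, frame = capture.read()
            if not ok:  # end of the stream, or the file could not be decoded
                break
            target = frame_path(out_dir, video, count)
            target.parent.mkdir(parents=True, exist_ok=True)
            cv2.imwrite(str(target), frame)
            count += 1
    finally:
        capture.release()
    return count
```

Under these assumptions, calling `video_to_frames` once per video file under `../path_to_smartdoc_videos/` would fill `../path_to_store_frames` with one folder of frames per video.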

After converting the videos to frames, we need to convert the data into the format required to train the models. We have to train two models: one to detect the four document corners, and the other to detect a corner point in an image. To prepare data for the first model, run:

python DocumentDataGenerator --d ../path_to_store_frames/ --o ../path_to_train_set

and for the second model, run:

python CornerDataGenerator --d ../path_to_store_frames/ --o ../path_to_corner_train_set

You can also download a version of this data in the right format from here:

- Baidu Pan (code: zex0)

- Google Drive

Now we can use the data to train our models. To train the document detector (the model that detects the four corners), run:

python documentDetectorTrainer.py --i path_to_train_set/

and to train the corner detector, run:

python cornerTrainer.py --i path_to_corner_train_set/ --o path_to_checkpoints/

Email: [email protected] in case of any queries.
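The data-preparation and training commands in this README can also be chained from one small driver script. The sketch below only assembles the commands exactly as they are written above and, by default, prints them instead of running them. The function names are hypothetical, and the sketch assumes every script is reachable from the current working directory, which is not the case for `video_to_image.py` unless you run from the SmartDocDataProcessor folder.

```python
import subprocess
import sys


def pipeline_commands(videos, frames, doc_set, corner_set, checkpoints):
    """Return the README's five steps, in order, as argument lists."""
    py = sys.executable  # use the interpreter that is running this script
    return [
        [py, "video_to_image.py", "--d", videos, "--o", frames],
        [py, "DocumentDataGenerator", "--d", frames, "--o", doc_set],
        [py, "CornerDataGenerator", "--d", frames, "--o", corner_set],
        [py, "documentDetectorTrainer.py", "--i", doc_set],
        [py, "cornerTrainer.py", "--i", corner_set, "--o", checkpoints],
    ]


def run_pipeline(commands, dry_run=True):
    """Print each command; execute it only when dry_run is False."""
    for argv in commands:
        print(" ".join(argv))
        if not dry_run:
            subprocess.run(argv, check=True)  # stop at the first failing step


commands = pipeline_commands(
    "../path_to_smartdoc_videos/",
    "../path_to_store_frames/",
    "../path_to_train_set",
    "../path_to_corner_train_set",
    "path_to_checkpoints/",
)
run_pipeline(commands, dry_run=True)
```

Keeping the dry run as the default makes it easy to check the paths before starting a long training job.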

To those working on this problem, I would encourage trying out fully convolutional neural networks (or some other variant of pixel-level segmentation network) as well; in my limited experiments, they out-perform my method quite easily and are more robust to unseen backgrounds (probably because they can use context information from the whole page when making the prediction). They do tend to be a bit slower and require more memory, though (because a high-resolution image is used as input).