This repository provides an implementation of a complete video processing workflow that takes advantage of the following Azure resources to perform ingestion, encoding, publishing, and analytics:

- Azure Media Services (AMS): Azure Media Services is a cloud-based media workflow platform that enables you to build solutions that require encoding, packaging, content protection, and live event broadcasting.

- Azure Video Indexer (AVI): Video Indexer consolidates various audio and video artificial intelligence (AI) technologies offered by Microsoft in one integrated service, making development simpler.

- Azure Durable Functions (ADF): Durable Functions is an extension of Azure Functions that lets you write stateful functions in a serverless compute environment.

- Azure Logic Apps (ALA): Azure Logic Apps is a cloud service that helps you schedule, automate, and orchestrate tasks, business processes, and workflows when you need to integrate apps, data, systems, and services across enterprises or organizations.

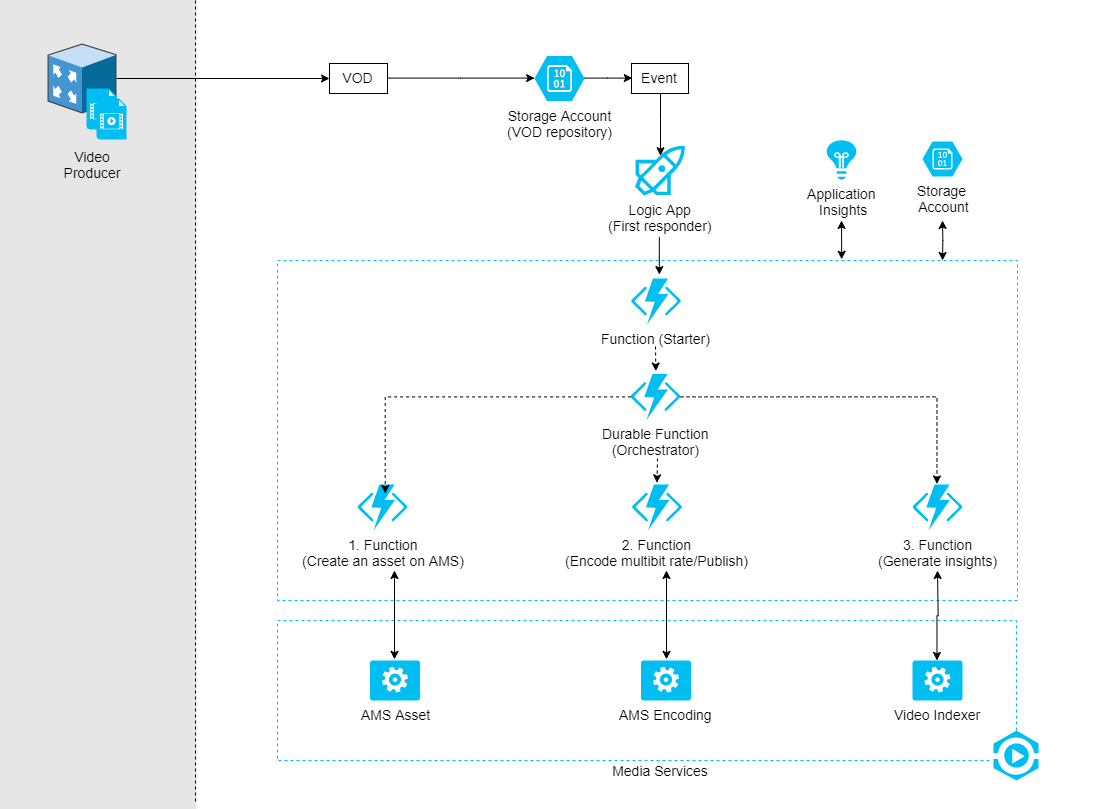

The proposed architecture is shown in the accompanying diagram. In summary, this is what happens when a new video arrives in a specific container (suggested here as "incoming-videos") within a given Azure Storage Account:

- The storage account raises a new event, which is captured by a Logic App.

- The Logic App captures the information sent with the event and calls a function that validates it and then starts a new stateful video processing flow by calling an orchestration function under ADF.

- Under the hood, ADF calls action functions that perform the ingestion, encoding, publishing, and analytics routines. All these action functions actually do is call specific routines provided by AMS and wait for their responses.
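To make the orchestration idea more concrete, here is a minimal, self-contained sketch in plain Python. It does not use the Durable Functions API: the `run` driver only stands in for the runtime, and the activity names `A_IngestVideo` and `A_EncodeAsset`, the function bodies, and the returned values are all hypothetical. Only `A_PublishesEncodedAsset` is a name taken from this solution. The point is the shape of the flow: the orchestrator asks for one activity at a time and resumes with its result.

```python
from typing import Callable, Dict, Generator, Tuple

# Hypothetical activity bodies. In the real solution each one would call
# a routine provided by AMS and wait for its response.
def ingest(video_name: str) -> str:
    return f"asset-for-{video_name}"

def encode(asset_id: str) -> str:
    return f"{asset_id}-encoded"

def publish(encoded_asset_id: str) -> str:
    return f"https://example.invalid/{encoded_asset_id}/manifest"

ACTIVITIES: Dict[str, Callable[[str], str]] = {
    "A_IngestVideo": ingest,             # hypothetical name
    "A_EncodeAsset": encode,             # hypothetical name
    "A_PublishesEncodedAsset": publish,  # name used by this solution
}

Step = Tuple[str, str]  # (activity name, activity input)

def orchestrator(video_name: str) -> Generator[Step, str, Dict[str, str]]:
    """Yield one activity request at a time and resume with its result."""
    asset_id = yield ("A_IngestVideo", video_name)
    encoded_id = yield ("A_EncodeAsset", asset_id)
    streaming_url = yield ("A_PublishesEncodedAsset", encoded_id)
    return {"assetId": encoded_id, "streamingUrl": streaming_url}

def run(video_name: str) -> Dict[str, str]:
    """Tiny driver standing in for the Durable Functions runtime."""
    flow = orchestrator(video_name)
    try:
        step = next(flow)
        while True:
            name, payload = step
            step = flow.send(ACTIVITIES[name](payload))
    except StopIteration as done:
        return done.value
```

Calling `run("clip.mp4")` walks through the three activities in order and returns the final dictionary produced by the orchestrator.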

Assuming you already have both .NET Core 2.2 (or later) and the latest version of the Azure SDK installed on your computer, everything you need to do to get it operational is described below.

This isn't a requirement, but you can make the process considerably easier by taking advantage of the Visual Studio 2017 (or later) tooling.

Each of these Azure services relies on a storage account, so the first step is to create one. Please follow the Azure tutorial on creating a storage account to get there.

Once it is created, add a new private container called "incoming-videos".

Create a new Azure Media Services account (the Azure documentation has a very nice tutorial on how to do it). Don't forget to attach the storage account you just created to this AMS account.

After it is created, don't forget to enable the "Streaming Endpoint" for the account, or you won't be able to see the actual result of the flow: videos being played.

As mentioned before, the solution uses a Logic App to listen to the events occurring within the storage account and react to them, so you need to create one. The Azure documentation has a very nice tutorial teaching how to do it.

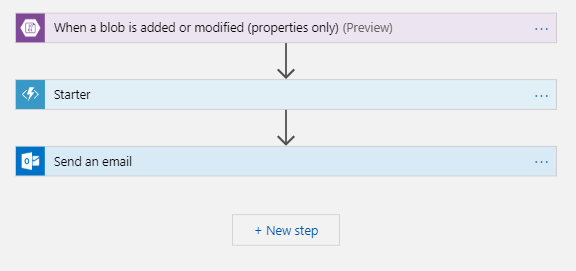

In the end, your Logic App flow should look like the one shown in the figure below.

Where:

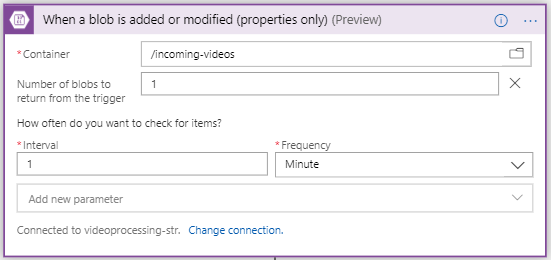

- The first action both defines a connection to the storage account and determines the container whose events will be listened to. Please see the figure below.

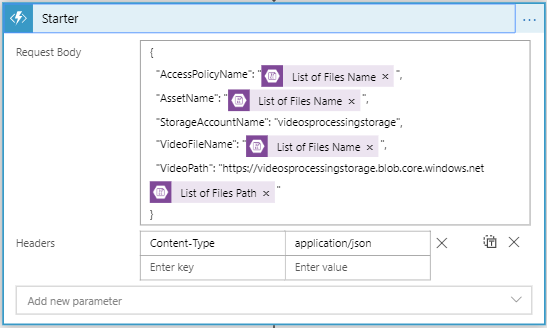

- The second action calls a regular Azure Function, passing some dynamic information in the body, as you can see in the image below.

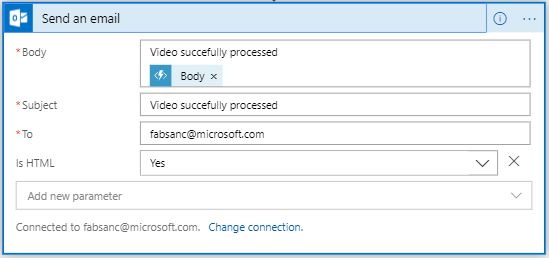

- Finally, the Logic App collects the results returned by the orchestration function and sends an email to the configured recipient, notifying them that the process has finished.
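The function called by the second action is expected to validate what it receives before starting the orchestration. The exact fields the Logic App sends are not listed here, so the following is only a sketch under assumptions: the `blobPath` field name and the list of allowed extensions are hypothetical, while the container name is the one suggested in this README.

```python
from pathlib import PurePosixPath
from typing import Dict, List

INCOMING_CONTAINER = "incoming-videos"         # container suggested in this README
ALLOWED_EXTENSIONS = {".mp4", ".mov", ".avi"}  # assumption: adjust to your inputs

def validate_request(body: Dict[str, object]) -> List[str]:
    """Return a list of problems; an empty list means the body is acceptable."""
    path = body.get("blobPath")  # hypothetical field name
    if not isinstance(path, str) or not path.strip():
        return ["blobPath is missing or empty"]

    errors: List[str] = []
    parts = PurePosixPath(path.strip("/")).parts
    # Expect "<container>/<blob name>" with the container being the incoming one.
    if len(parts) < 2 or parts[0] != INCOMING_CONTAINER:
        errors.append(f"blob must live in the '{INCOMING_CONTAINER}' container")
    if PurePosixPath(path).suffix.lower() not in ALLOWED_EXTENSIONS:
        errors.append("unsupported file extension")
    return errors
```

Returning a list of problems instead of raising on the first one makes it easy to send every issue back to the Logic App in a single response.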

There are several ways to publish this code to Azure, such as setting up continuous integration with Azure DevOps, FTP, and so forth. For test purposes, though, the easiest way is to use the Web Deploy protocol from Visual Studio. This is what I'm doing here.

NOTE: Because the entire processing flow can take several minutes to complete, and because Functions running under the Consumption plan are limited to a maximum of 10 minutes before they time out, I strongly recommend deploying to a Function App running under a regular hosting plan. The Azure Functions documentation explains this constraint in more detail.

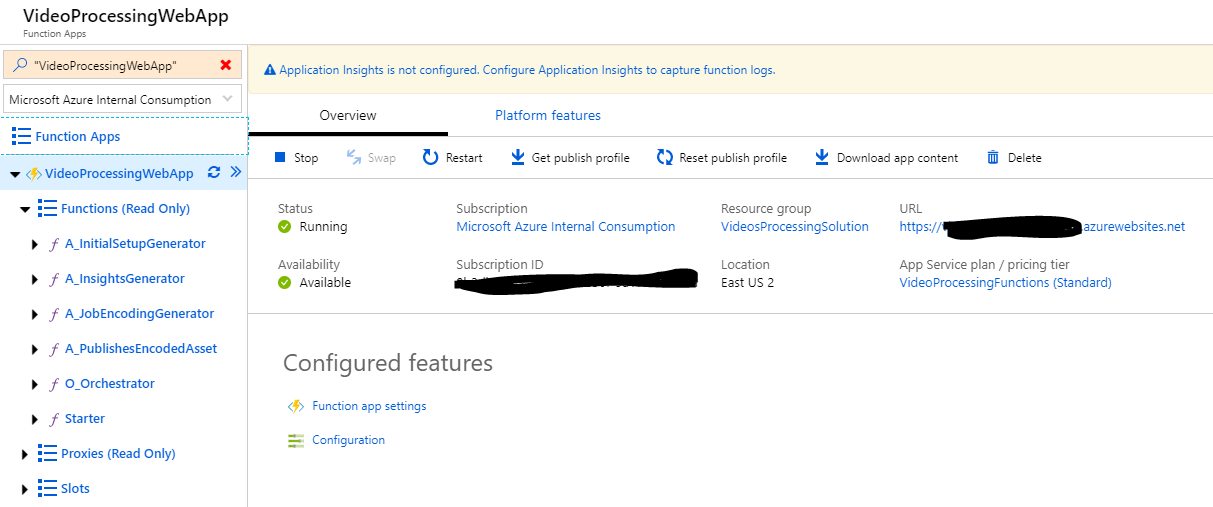

In the end, you should have a Function App in your Azure environment that looks very similar to the one in the accompanying figure.

At this point, Azure Media Services and the new version of Video Indexer are two separate services that can work together. This means that you can use Video Indexer features with your existing AMS account to get the job done.

Because we're interested in having insights extraction as a result of the video processing flow, I'm going to bring the two together, and for this I need to connect my Video Indexer account to my AMS account. The procedure is well detailed in the Video Indexer documentation.

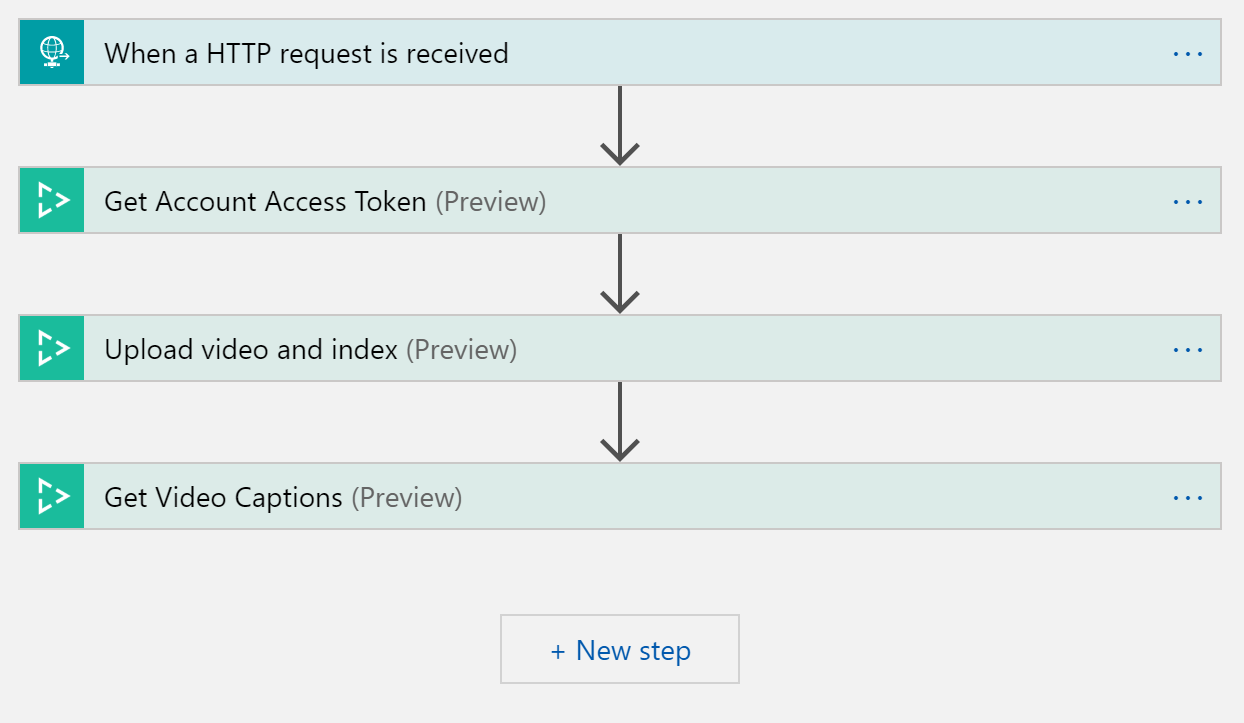

Finally, we're taking advantage of some pre-built actions within the Logic Apps service to help us with the "insights extraction" part.

Please go through the process of creating a new Logic App and then add the following steps to it, with the proper configuration (as shown in the figures below).

The general flow is:

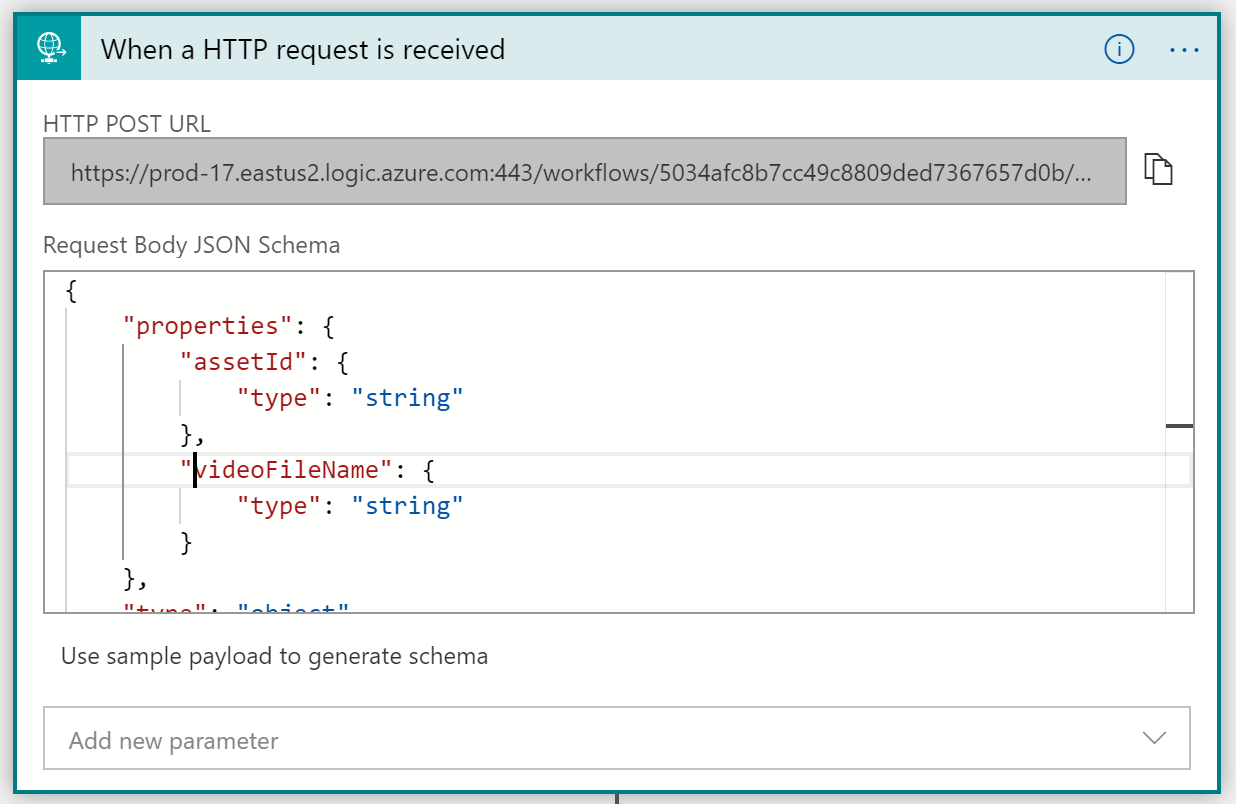

- When the activity function called "A_PublishesEncodedAsset" finishes its job, it calls the Logic App you just created. The request has to carry two pieces of information in the body: "assetId" and "videoFileName". VI uses these two values to uniquely identify the video from which insights will be extracted.

The payload template expected by the HTTP Request action is presented below.

    {
        "properties": {
            "assetId": {
                "type": "string"
            },
            "videoFileName": {
                "type": "string"
            }
        },
        "type": "object"
    }
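As an illustration of how a caller could prepare a body matching this template, here is a small sketch that uses only the Python standard library. The two field names come from the template above. Everything else is an assumption: the helper names are hypothetical, the URL is a placeholder for your own Logic App trigger URL, and rejecting empty strings is stricter than the template itself requires. The request is only built here, not sent.

```python
import json
import urllib.request
from typing import Dict

REQUIRED_FIELDS = ("assetId", "videoFileName")  # field names from the template

def build_payload(asset_id: str, video_file_name: str) -> Dict[str, str]:
    """Build the body and check both fields are non-empty strings (an assumption)."""
    payload = {"assetId": asset_id, "videoFileName": video_file_name}
    for field in REQUIRED_FIELDS:
        value = payload[field]
        if not isinstance(value, str) or not value:
            raise ValueError(f"{field} must be a non-empty string")
    return payload

def build_request(logic_app_url: str, payload: Dict[str, str]) -> urllib.request.Request:
    """Prepare (but do not send) the JSON POST for the Logic App HTTP trigger."""
    return urllib.request.Request(
        logic_app_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

# Placeholder URL: replace it with the trigger URL of your own Logic App.
request = build_request(
    "https://example.invalid/logic-app-trigger",
    build_payload("my-asset-id", "clip.mp4"),
)
```

Passing `request` to `urllib.request.urlopen` would perform the actual call once a real trigger URL is in place.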

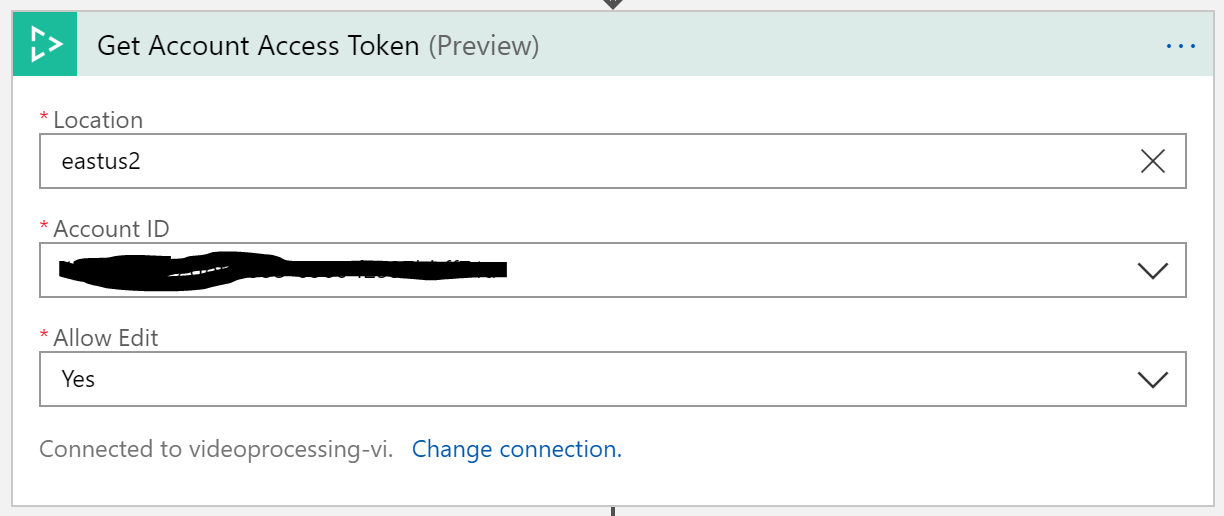

- As a result of the HTTP request, a call to the "VI Access Token" task is made. This action obtains the authorization needed for us to have the service extract the insights on our behalf.

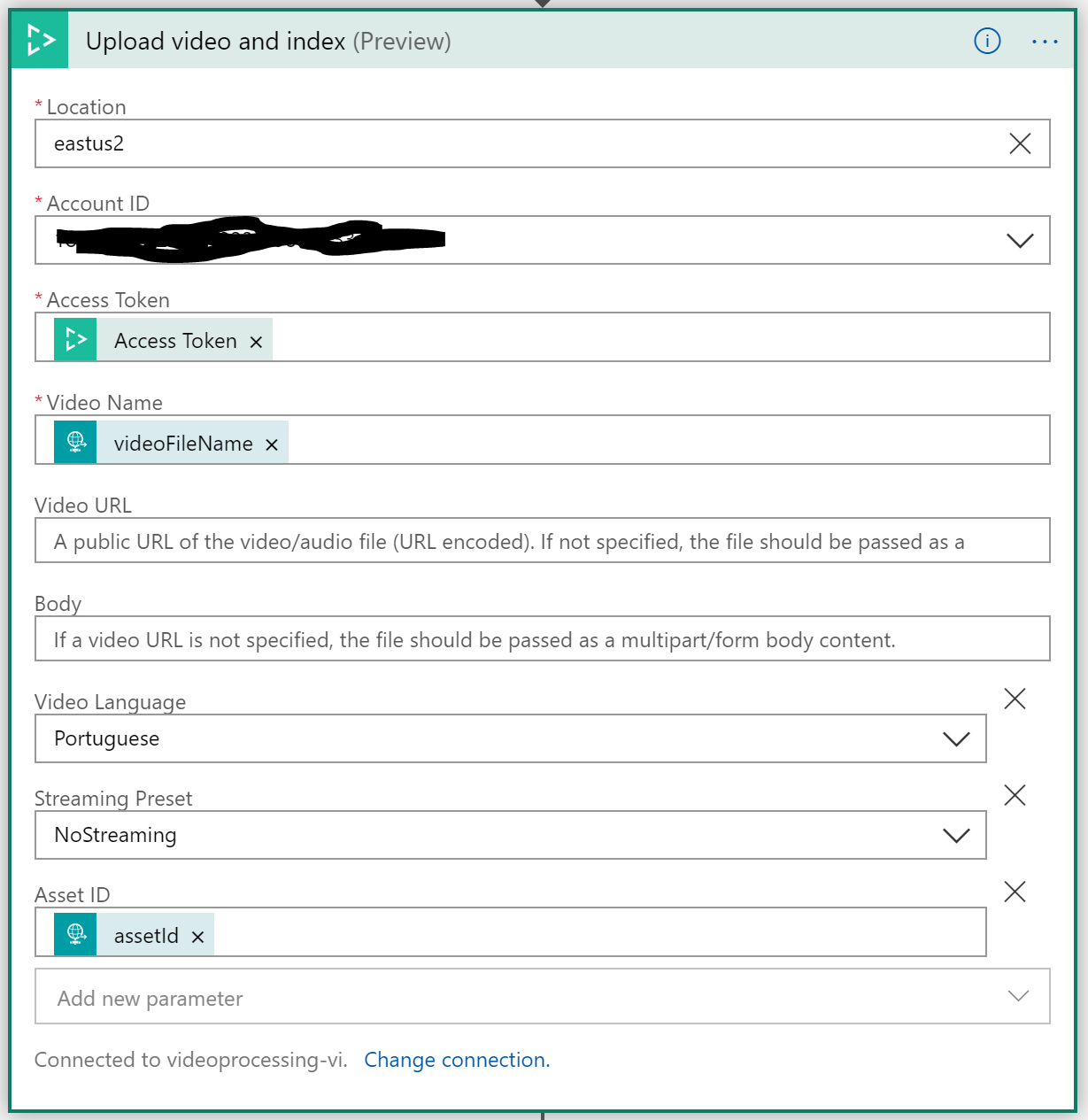

- Then, we call the specific routine responsible for performing the extraction itself. Please note that we're passing the dynamic data received by the "HTTP Request" task as well as the access token obtained in the previous step.

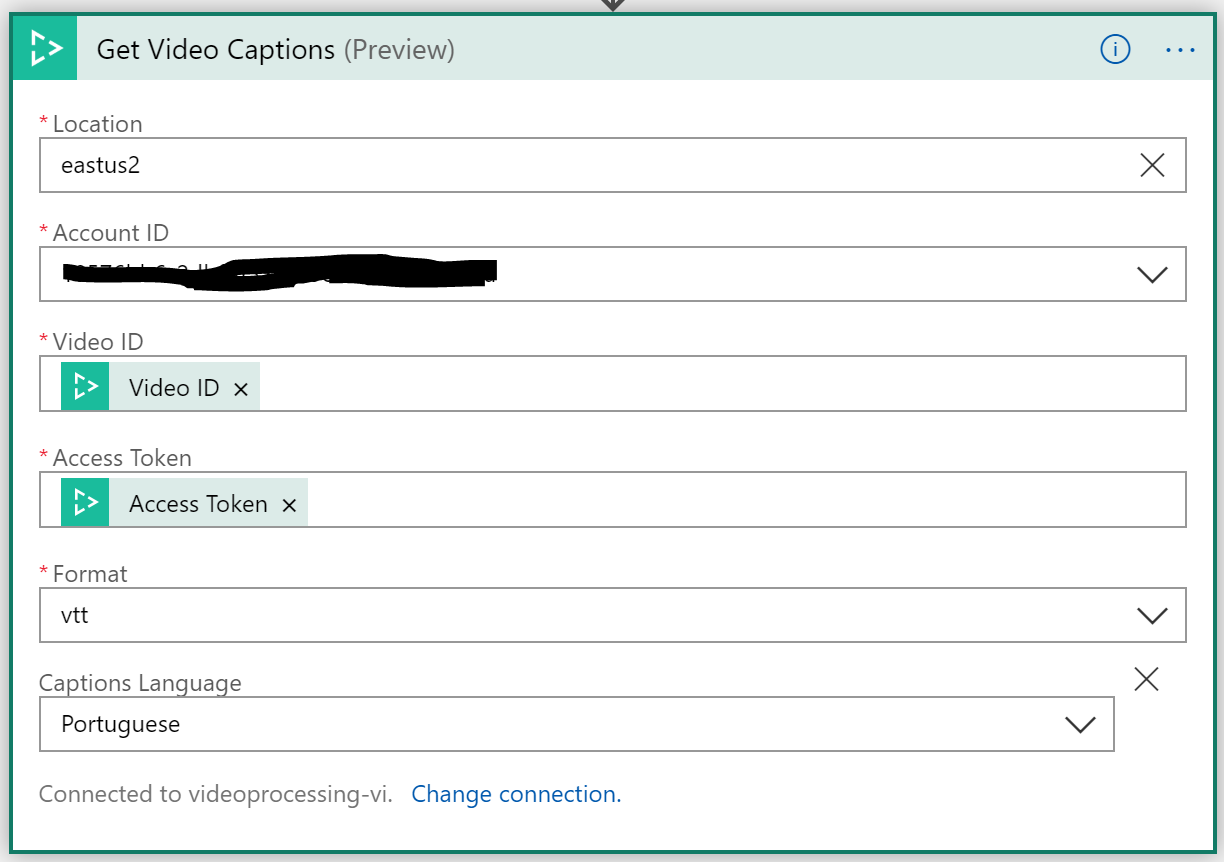

- The final step uses a feature that lets us retrieve the video's transcription/caption data specifically.
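The way data moves between these Logic App actions can be sketched in a few lines of Python. This is not the Video Indexer API or connector: `IndexerClient` is a hypothetical interface that simply mirrors the three actions, and `FakeIndexerClient` is a stand-in so the sketch runs without any Azure account. What it shows is that the access token from the first action is passed to both later actions, together with the "assetId" and "videoFileName" received by the HTTP request.

```python
from typing import Dict, List, Protocol

class IndexerClient(Protocol):
    """Hypothetical interface mirroring the three Logic App actions."""
    def get_access_token(self) -> str: ...
    def index_video(self, token: str, asset_id: str, video_file_name: str) -> str: ...
    def get_captions(self, token: str, video_id: str) -> str: ...

def extract_insights(client: IndexerClient, request_body: Dict[str, str]) -> Dict[str, str]:
    """Token first, then indexing, then captions, reusing the same token."""
    token = client.get_access_token()
    video_id = client.index_video(
        token, request_body["assetId"], request_body["videoFileName"]
    )
    captions = client.get_captions(token, video_id)
    return {"videoId": video_id, "captions": captions}

class FakeIndexerClient:
    """In-memory stand-in used only to exercise the flow."""
    def __init__(self) -> None:
        self.calls: List[str] = []

    def get_access_token(self) -> str:
        self.calls.append("token")
        return "fake-token"

    def index_video(self, token: str, asset_id: str, video_file_name: str) -> str:
        self.calls.append("index")
        return f"video-{asset_id}"

    def get_captions(self, token: str, video_id: str) -> str:
        self.calls.append("captions")
        return f"captions for {video_id}"

fake = FakeIndexerClient()
result = extract_insights(fake, {"assetId": "abc", "videoFileName": "clip.mp4"})
```

In a real flow indexing takes time, so the captions step would have to wait until indexing has finished; the sketch leaves that waiting out.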