https://github.com/timzhang642/3D-Machine-Learning

https://github.com/Dok11/nn-dldm

https://medium.com/@omarbarakat1995/depth-estimation-with-deep-neural-networks-part-1-5fa6d2237d0d

https://medium.com/datadriveninvestor/depth-estimation-with-deep-neural-networks-part-2-81ee374888eb

https://github.com/MahmoudSelmy/DeeperDepthEstimation

https://github.com/MahmoudSelmy/DepthEstimationVGG/blob/master/README.md

https://towardsdatascience.com/depth-estimation-on-camera-images-using-densenets-ac454caa893

https://github.com/priya-dwivedi/Deep-Learning/tree/master/depth_estimation

https://arxiv.org/abs/1812.11941

https://github.com/ialhashim/DenseDepth

https://www.doc.ic.ac.uk/~ajd/Publications/McCormac-J-2019-PhD-Thesis.pdf

Repository for 3D-LMNet: Latent Embedding Matching for Accurate and Diverse 3D Point Cloud Reconstruction from a Single Image [BMVC 2018]

https://github.com/val-iisc/3d-lmnet

3D-LMNet is a latent embedding matching approach for 3D point cloud reconstruction from a single image. To better incorporate the data prior and generate meaningful reconstructions, we first train a 3D point cloud auto-encoder and then learn a mapping from the 2D image to the corresponding learnt embedding. For a given image, there may exist multiple plausible 3D reconstructions depending on the object view. To tackle this uncertainty in the reconstruction, we predict multiple reconstructions that are consistent with the input view by learning a probabilistic latent space using a view-specific ‘diversity loss’. We show that learning a good latent space of 3D objects is essential for the task of single-view 3D reconstruction.
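The latent matching idea described above can be illustrated with a minimal, standard-library-only sketch. It is not the 3D-LMNet code: the function names `latent_matching_loss` and `sample_latents`, the squared L2 distance, and the Gaussian sampling around a predicted mean are all assumptions made for illustration, and the toy vectors stand in for the outputs of a point cloud encoder and an image encoder.

```python
import random


def latent_matching_loss(z_image, z_pointcloud):
    """Squared L2 distance between the image latent and the point cloud latent.

    Assumption: the distance used here is illustrative; the repository may
    use a different distance or weighting.
    """
    if len(z_image) != len(z_pointcloud):
        raise ValueError("latent vectors must have the same length")
    return sum((a - b) ** 2 for a, b in zip(z_image, z_pointcloud))


def sample_latents(mean, std, n_samples, seed=0):
    """Draw several latent candidates around a predicted mean.

    Assumption: a per-dimension Gaussian is one simple way to obtain
    multiple plausible latents for a single input view.
    """
    rng = random.Random(seed)
    return [
        [m + s * rng.gauss(0.0, 1.0) for m, s in zip(mean, std)]
        for _ in range(n_samples)
    ]


# Toy stand-ins: z_pc would come from the pre-trained point cloud
# auto-encoder, z_img from the image encoder being trained.
z_pc = [0.5, -1.0, 2.0]
z_img = [0.4, -0.8, 2.1]

loss = latent_matching_loss(z_img, z_pc)
candidates = sample_latents(z_img, [0.1, 0.1, 0.1], n_samples=3)
```

Each candidate latent would then be passed through the (frozen) point cloud decoder to obtain one possible reconstruction.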

https://github.com/fangchangma/sparse-to-dense.pytorch

This repo implements the training and testing of deep regression neural networks for "Sparse-to-Dense: Depth Prediction from Sparse Depth Samples and a Single Image" by Fangchang Ma and Sertac Karaman at MIT. A video demonstration is available on YouTube.
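As a rough illustration of what "sparse depth samples" means as a network input, the sketch below keeps a small random subset of pixels from a dense depth map and zeroes out the rest. The function name `sample_sparse_depth`, the nested-list image representation, and the choice of drawing exactly `n_samples` pixels are assumptions for this example, not the repository's data loader.

```python
import random


def sample_sparse_depth(dense_depth, n_samples, seed=0):
    """Keep up to n_samples randomly chosen valid depth pixels, zero the rest.

    dense_depth is a list of rows of depth values; 0 marks a missing value.
    Assumption: exact-count sampling is a simplification chosen here.
    """
    rng = random.Random(seed)
    height = len(dense_depth)
    width = len(dense_depth[0])
    # Only pixels with a valid (positive) depth can be sampled.
    valid = [
        (r, c)
        for r in range(height)
        for c in range(width)
        if dense_depth[r][c] > 0
    ]
    keep = set(rng.sample(valid, min(n_samples, len(valid))))
    return [
        [dense_depth[r][c] if (r, c) in keep else 0.0 for c in range(width)]
        for r in range(height)
    ]


dense = [
    [1.0, 2.0, 0.0],
    [3.0, 4.0, 5.0],
]
sparse = sample_sparse_depth(dense, n_samples=3)
```

The resulting sparse map has the same shape as the image, so it can be stacked with the RGB channels as a fourth input channel.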

https://www.digitalproduction.com/2019/05/27/google-deep-learning-depth-prediction/

https://github.com/vcg-uvic/learned-correspondence-release

Learning to Find Good Correspondences (CVPR 2018). This repository is a reference implementation for K. Yi*, E. Trulls*, Y. Ono, V. Lepetit, M. Salzmann, and P. Fua, "Learning to Find Good Correspondences", CVPR 2018 (* equal contributions). If you use this code in your research, please cite the paper.

https://github.com/phuang17/DeepMVS

DeepMVS is a deep convolutional neural network that learns to estimate pixel-wise disparity maps from an arbitrary number of unordered images whose camera poses are already known or estimated.

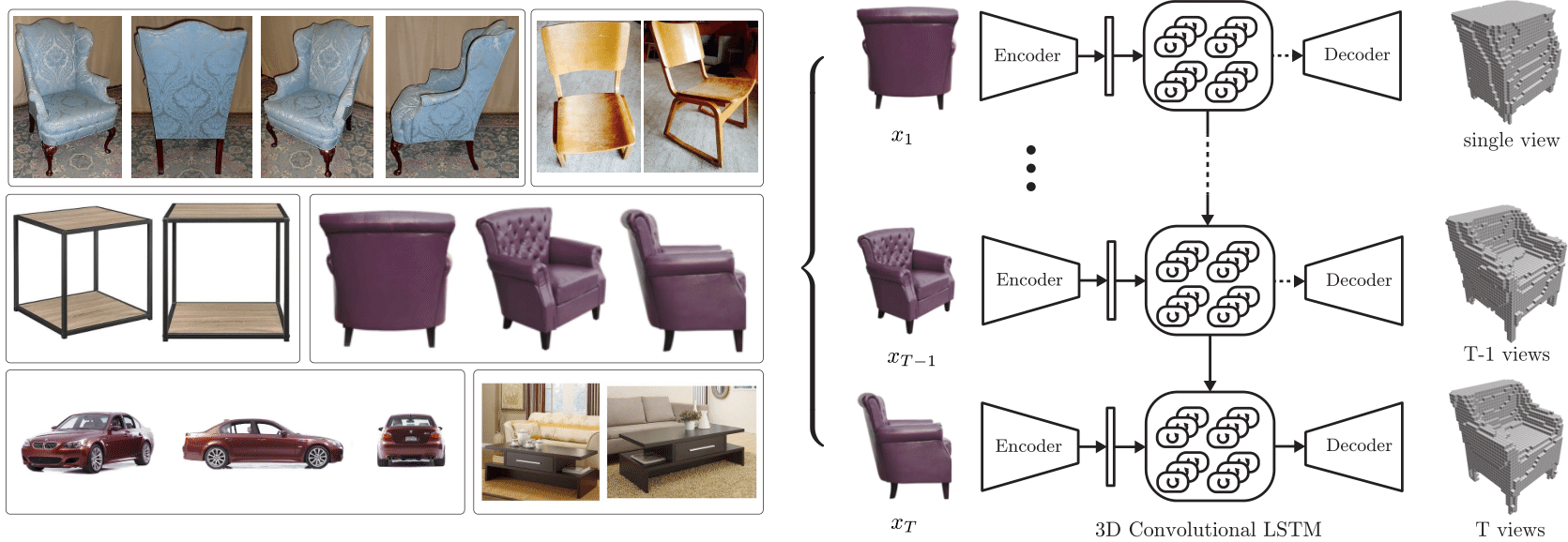

https://github.com/micmelesse/3D-reconstruction-with-Neural-Networks

3D reconstruction with neural networks using TensorFlow. Video: https://www.youtube.com/watch?v=iI6ZMST8Ri0

https://github.com/angeladai/ScanComplete

https://github.com/jgwak/McRecon

http://campar.in.tum.de/Chair/ProjectDepthPrediction

https://github.com/yihui-he/Estimated-Depth-Map-Helps-Image-Classification

http://vladlen.info/papers/deep-fundamental.pdf

https://ai.googleblog.com/2019/05/moving-camera-moving-people-deep.html

https://github.com/dontLoveBugs/FCRN_pytorch

https://github.com/timctho/VNect-tensorflow

https://github.com/fangchangma/sparse-to-dense

https://github.com/OniroAI/MonoDepth-PyTorch

https://github.com/Huangying-Zhan/Depth-VO-Feat

https://github.com/gautam678/Pix2Depth

https://github.com/lmb-freiburg/deeptam

https://github.com/Yevkuzn/semodepth

https://github.com/CVLAB-Unibo/Semantic-Mono-Depth

https://github.com/JunjH/Visualizing-CNNs-for-monocular-depth-estimation

https://github.com/adahbingee/pais-mvs