When I try and train a tagger using the latest English data, I get a strange error from UDPipe,

$ cat *.conllu | udpipe --train --tokenize --tagger --parser=no english.udpipe

Loading training data: done.

Training the UDPipe model.

Training tokenizer with the following options: tokenize_url=1, allow_spaces=0, dimension=24

epochs=100, batch_size=50, learning_rate=0.0050, dropout=0.1000, early_stopping=0

Epoch 1, logprob: -6.1698e+04, training acc: 95.88%

Epoch 2, logprob: -1.7173e+04, training acc: 98.92%

Epoch 3, logprob: -1.4062e+04, training acc: 99.05%

Epoch 4, logprob: -1.2385e+04, training acc: 99.16%

Epoch 5, logprob: -1.1286e+04, training acc: 99.24%

Epoch 6, logprob: -1.1481e+04, training acc: 99.21%

Epoch 7, logprob: -1.0287e+04, training acc: 99.30%

Epoch 8, logprob: -1.0279e+04, training acc: 99.29%

Epoch 9, logprob: -9.8852e+03, training acc: 99.32%

Epoch 10, logprob: -9.6343e+03, training acc: 99.34%

Epoch 11, logprob: -9.4930e+03, training acc: 99.35%

Epoch 12, logprob: -9.2315e+03, training acc: 99.37%

Epoch 13, logprob: -9.2220e+03, training acc: 99.38%

Epoch 14, logprob: -8.8526e+03, training acc: 99.39%

Epoch 15, logprob: -8.7573e+03, training acc: 99.40%

Epoch 16, logprob: -8.8190e+03, training acc: 99.41%

Epoch 17, logprob: -8.8209e+03, training acc: 99.39%

Epoch 18, logprob: -8.3526e+03, training acc: 99.42%

Epoch 19, logprob: -8.3097e+03, training acc: 99.43%

Epoch 20, logprob: -8.7686e+03, training acc: 99.41%

Epoch 21, logprob: -8.5230e+03, training acc: 99.42%

Epoch 22, logprob: -8.0554e+03, training acc: 99.44%

Epoch 23, logprob: -8.0775e+03, training acc: 99.45%

Epoch 24, logprob: -8.4924e+03, training acc: 99.42%

Epoch 25, logprob: -8.2039e+03, training acc: 99.45%

Epoch 26, logprob: -7.9598e+03, training acc: 99.46%

Epoch 27, logprob: -7.9808e+03, training acc: 99.46%

Epoch 28, logprob: -8.0371e+03, training acc: 99.46%

Epoch 29, logprob: -7.9295e+03, training acc: 99.47%

Epoch 30, logprob: -7.5110e+03, training acc: 99.47%

Epoch 31, logprob: -7.9097e+03, training acc: 99.47%

Epoch 32, logprob: -7.8456e+03, training acc: 99.48%

Epoch 33, logprob: -7.9043e+03, training acc: 99.46%

Epoch 34, logprob: -7.7426e+03, training acc: 99.48%

Epoch 35, logprob: -7.6989e+03, training acc: 99.47%

Epoch 36, logprob: -7.7118e+03, training acc: 99.47%

Epoch 37, logprob: -7.8382e+03, training acc: 99.47%

Epoch 38, logprob: -7.6632e+03, training acc: 99.47%

Epoch 39, logprob: -7.6765e+03, training acc: 99.49%

Epoch 40, logprob: -7.7373e+03, training acc: 99.48%

Epoch 41, logprob: -7.5058e+03, training acc: 99.50%

Epoch 42, logprob: -7.4203e+03, training acc: 99.50%

Epoch 43, logprob: -7.2875e+03, training acc: 99.51%

Epoch 44, logprob: -7.5939e+03, training acc: 99.48%

Epoch 45, logprob: -7.4016e+03, training acc: 99.50%

Epoch 46, logprob: -7.3488e+03, training acc: 99.51%

Epoch 47, logprob: -7.3759e+03, training acc: 99.49%

Epoch 48, logprob: -7.7003e+03, training acc: 99.49%

Epoch 49, logprob: -7.1461e+03, training acc: 99.51%

Epoch 50, logprob: -7.4844e+03, training acc: 99.48%

Epoch 51, logprob: -7.4017e+03, training acc: 99.50%

Epoch 52, logprob: -7.3334e+03, training acc: 99.49%

Epoch 53, logprob: -7.1444e+03, training acc: 99.50%

Epoch 54, logprob: -7.2387e+03, training acc: 99.51%

Epoch 55, logprob: -7.1217e+03, training acc: 99.51%

Epoch 56, logprob: -7.4385e+03, training acc: 99.51%

Epoch 57, logprob: -7.1386e+03, training acc: 99.50%

Epoch 58, logprob: -7.1672e+03, training acc: 99.50%

Epoch 59, logprob: -7.3106e+03, training acc: 99.52%

Epoch 60, logprob: -7.1694e+03, training acc: 99.50%

Epoch 61, logprob: -7.1212e+03, training acc: 99.52%

Epoch 62, logprob: -7.0805e+03, training acc: 99.52%

Epoch 63, logprob: -7.0900e+03, training acc: 99.51%

Epoch 64, logprob: -7.2829e+03, training acc: 99.50%

Epoch 65, logprob: -6.8592e+03, training acc: 99.52%

Epoch 66, logprob: -7.2357e+03, training acc: 99.51%

Epoch 67, logprob: -7.1893e+03, training acc: 99.51%

Epoch 68, logprob: -7.2612e+03, training acc: 99.51%

Epoch 69, logprob: -7.0492e+03, training acc: 99.52%

Epoch 70, logprob: -7.2061e+03, training acc: 99.50%

Epoch 71, logprob: -7.0483e+03, training acc: 99.52%

Epoch 72, logprob: -6.9997e+03, training acc: 99.52%

Epoch 73, logprob: -7.1702e+03, training acc: 99.51%

Epoch 74, logprob: -6.9724e+03, training acc: 99.52%

Epoch 75, logprob: -7.2270e+03, training acc: 99.50%

Epoch 76, logprob: -7.0296e+03, training acc: 99.51%

Epoch 77, logprob: -6.9355e+03, training acc: 99.53%

Epoch 78, logprob: -7.1586e+03, training acc: 99.51%

Epoch 79, logprob: -7.0209e+03, training acc: 99.53%

Epoch 80, logprob: -6.9683e+03, training acc: 99.52%

Epoch 81, logprob: -7.1498e+03, training acc: 99.52%

Epoch 82, logprob: -7.2023e+03, training acc: 99.52%

Epoch 83, logprob: -6.8345e+03, training acc: 99.53%

Epoch 84, logprob: -7.1528e+03, training acc: 99.51%

Epoch 85, logprob: -6.6544e+03, training acc: 99.54%

Epoch 86, logprob: -6.9870e+03, training acc: 99.52%

Epoch 87, logprob: -6.9638e+03, training acc: 99.51%

Epoch 88, logprob: -6.9834e+03, training acc: 99.53%

Epoch 89, logprob: -6.5750e+03, training acc: 99.56%

Epoch 90, logprob: -6.9301e+03, training acc: 99.52%

Epoch 91, logprob: -7.0809e+03, training acc: 99.52%

Epoch 92, logprob: -6.9539e+03, training acc: 99.52%

Epoch 93, logprob: -7.1273e+03, training acc: 99.52%

Epoch 94, logprob: -7.0223e+03, training acc: 99.51%

Epoch 95, logprob: -6.8614e+03, training acc: 99.53%

Epoch 96, logprob: -6.8142e+03, training acc: 99.54%

Epoch 97, logprob: -6.9596e+03, training acc: 99.52%

Epoch 98, logprob: -6.8749e+03, training acc: 99.53%

Epoch 99, logprob: -7.0501e+03, training acc: 99.51%

Epoch 100, logprob: -7.1078e+03, training acc: 99.52%

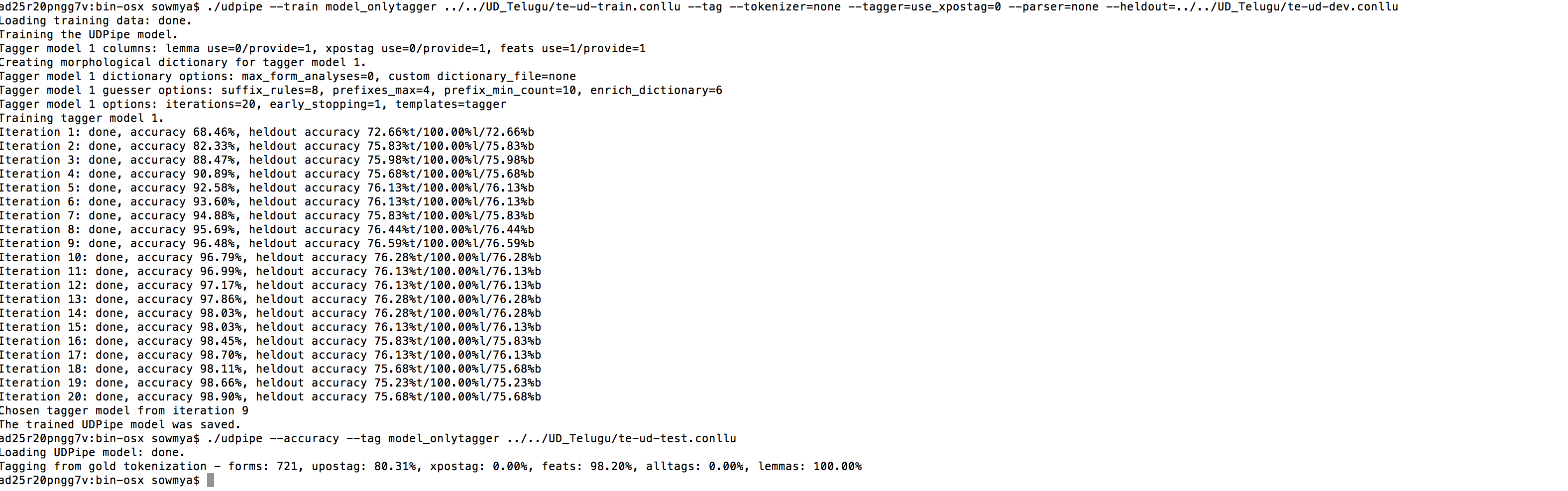

Tagger model 1 columns: lemma use=1/provide=1, xpostag use=1/provide=1, feats use=1/provide=1

Creating morphological dictionary for tagger model 1.

Tagger model 1 dictionary options: max_form_analyses=0, custom dictionary_file=none

Tagger model 1 guesser options: suffix_rules=8, prefixes_max=4, prefix_min_count=10, enrich_dictionary=6

An error occurred during model training: Should encode value 338 in one byte!