For this project, you will work with the Reacher environment.

In this environment, a double-jointed arm can move to target locations. A reward of +0.1 is provided for each step that the agent's hand is in the goal location. Thus, the goal of your agent is to maintain its position at the target location for as many time steps as possible.

The observation space consists of 33 variables corresponding to position, rotation, velocity, and angular velocities of the arm. Each action is a vector with four numbers, corresponding to torque applicable to two joints. Every entry in the action vector should be a number between -1 and 1.
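As a rough illustration of the action constraint above, the sketch below clips raw policy outputs into the valid range before they would be sent to the simulator. The helper name `to_valid_actions` and the constants are hypothetical and not part of this repository; only the sizes (33 observations, 4 action entries) and the [-1, 1] bounds come from the description above.

```python
import numpy as np

STATE_SIZE = 33   # observation variables per agent (from the description above)
ACTION_SIZE = 4   # torque entries per action (from the description above)


def to_valid_actions(raw_actions):
    """Clip raw policy outputs so every entry lies in [-1, 1].

    Hypothetical helper: accepts one action (shape (4,)) or a batch
    (shape (num_agents, 4)) and returns an array of the same shape.
    """
    actions = np.asarray(raw_actions, dtype=np.float64)
    if actions.shape[-1] != ACTION_SIZE:
        raise ValueError(
            "expected last dimension %d, got %d" % (ACTION_SIZE, actions.shape[-1])
        )
    return np.clip(actions, -1.0, 1.0)


# Example: noisy outputs that overshoot the bounds are pulled back in.
clipped = to_valid_actions([[2.0, -3.0, 0.5, 0.0]])
```

Clipping is only one option; a policy network that ends in a `tanh` layer would keep its outputs inside the range without this step.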

Solving the environment requires accounting for all 20 agents: they must reach an average score of +30 over 100 consecutive episodes and over all agents. Specifically,

- After each episode, we add up the rewards that each agent received (without discounting), to get a score for each agent. This yields 20 (potentially different) scores. We then take the average of these 20 scores.

- This yields an average score for each episode (where the average is over all 20 agents).

The environment is considered solved when the average (over 100 episodes) of those average scores is at least +30.
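The solve criterion above can be sketched as a small check over a training history. This is a minimal illustration, not the notebook's code: the function name `first_solved_episode` and its input layout (one list of per-agent scores per episode) are assumptions made for the example.

```python
from collections import deque
from statistics import mean


def first_solved_episode(per_agent_scores, target=30.0, window=100):
    """Return the 1-based episode at which the environment counts as solved.

    per_agent_scores: iterable with one entry per episode, each entry being
    the undiscounted scores of all agents in that episode.
    Returns None if the criterion is never met.
    """
    recent = deque(maxlen=window)  # average scores of the last `window` episodes
    for episode, agent_scores in enumerate(per_agent_scores, start=1):
        recent.append(mean(agent_scores))  # average over all agents
        if len(recent) == window and mean(recent) >= target:
            return episode
    return None


# Example: 50 poor episodes followed by 100 episodes where every agent scores 30.
history = [[0.0] * 20] * 50 + [[30.0] * 20] * 100
solved_at = first_solved_episode(history)
```

Since each in-goal step pays +0.1, an episode score of +30 corresponds to an agent's hand being in the goal location for 300 steps.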

To set up your Python environment to run the code in this repository, follow the instructions below.

- Create (and activate) a new environment with Python 3.6.

  - Linux or Mac:

        conda create --name drlcc python=3.6
        source activate drlcc

  - Windows:

        conda create --name drlcc python=3.6
        activate drlcc

- Clone the repository (if you haven't already!), and install several dependencies.

      git clone https://github.com/WillieMaddox/DRL_Continuous_Control.git
      cd DRL_Continuous_Control
      pip install .

- Download the environment from one of the links below. You need only select the environment that matches your operating system:

  - Linux: click here

  - Mac OSX: click here

  - Windows (32-bit): click here

  - Windows (64-bit): click here

  (For Windows users) Check out this link if you need help with determining if your computer is running a 32-bit version or 64-bit version of the Windows operating system.

  (For AWS) If you'd like to train the agent on AWS (and have not enabled a virtual screen), then please use this link (version 1) or this link (version 2) to obtain the "headless" version of the environment. You will not be able to watch the agent without enabling a virtual screen, but you will be able to train the agent. (To watch the agent, you should follow the instructions to enable a virtual screen, and then download the environment for the Linux operating system above.)

  Note: the `communicator_objects` and `unityagents` folders contain the files needed to communicate with the Reacher simulator. I simply copied them from the ml-agents repo for convenience.

- Now place the file in your local repository and unzip (or decompress) it. If you prefer not to copy the simulator directly into your project, you can link to it instead:

      ln -s "path/to/unzipped/Reacher/dir" .
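If you are unsure which download from the list above matches your machine, a quick check from Python can help. The sketch below is only a hint, under the assumption that `platform.machine()` reports a name ending in `64` on 64-bit systems (for example `AMD64` or `x86_64`); the function name `reacher_build_label` is hypothetical.

```python
import platform


def reacher_build_label(system=None, machine=None):
    """Map the current platform to one of the download labels listed above.

    Both arguments default to what the `platform` module reports; they are
    exposed only so the mapping can be tried out for other machines.
    """
    system = platform.system() if system is None else system
    machine = platform.machine() if machine is None else machine
    if system == "Linux":
        return "Linux"
    if system == "Darwin":  # platform.system() reports macOS as "Darwin"
        return "Mac OSX"
    if system == "Windows":
        # Assumption: 64-bit machine names end in "64" (AMD64, x86_64, ARM64).
        return "Windows (64-bit)" if machine.endswith("64") else "Windows (32-bit)"
    raise ValueError("no Reacher build listed for platform %r" % system)


# Label for the machine running this snippet, or None if it is not listed.
try:
    current_label = reacher_build_label()
except ValueError:
    current_label = None
```

Treat the result as a starting point and confirm it against your operating system settings, especially on Windows.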

Follow the instructions in Continuous_Control.ipynb to get started with training your own agent!
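Before opening the notebook, it can help to see the rough shape of one episode. The sketch below assumes the `UnityEnvironment` interface of the bundled `unityagents` package (`brain_names`, `reset`, `step`, and the `vector_observations`, `rewards`, and `local_done` fields); the helper names, the uniform random policy, and the `max_steps` safety cap are illustrative assumptions, not the notebook's training code.

```python
import numpy as np


def load_reacher(file_name):
    """Open the simulator; file_name is the path to the unzipped executable."""
    # Imported here so the episode loop below can be read without the simulator.
    from unityagents import UnityEnvironment
    return UnityEnvironment(file_name=file_name)


def run_random_episode(env, action_size=4, max_steps=10000):
    """Play one episode with uniform random actions and return per-agent scores.

    max_steps is a hypothetical safety cap, not a property of the environment.
    """
    brain_name = env.brain_names[0]                    # use the default brain
    env_info = env.reset(train_mode=True)[brain_name]
    num_agents = len(env_info.vector_observations)     # one observation row per agent
    scores = np.zeros(num_agents)                      # undiscounted return per agent
    for _ in range(max_steps):
        # Every action entry must lie between -1 and 1.
        actions = np.random.uniform(-1.0, 1.0, size=(num_agents, action_size))
        env_info = env.step(actions)[brain_name]
        scores += np.asarray(env_info.rewards)
        if np.any(env_info.local_done):                # stop when any agent is done
            break
    return scores
```

With the simulator unzipped, usage would look like `env = load_reacher(...)` followed by `run_random_episode(env).mean()`, which gives the per-episode average over agents that the solve criterion is based on.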

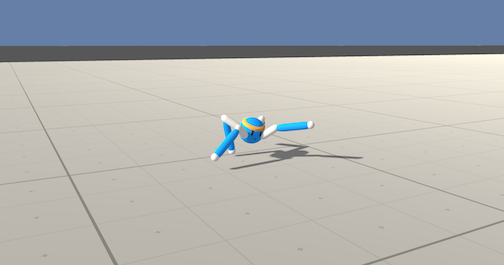

After you have successfully completed the project, you might like to solve the more difficult Crawler environment.

In this continuous control environment, the goal is to teach a creature with four legs to walk forward without falling.

You can read more about this environment in the ML-Agents GitHub here. To solve this harder task, you'll need to download a new Unity environment. (Note: Udacity students should not submit a project with this new environment.)

You need only select the environment that matches your operating system:

- Linux: click here

- Mac OSX: click here

- Windows (32-bit): click here

- Windows (64-bit): click here

Now place the file in your local repository and unzip (or decompress) the file. Next, open Crawler.ipynb and follow the instructions to learn how to use the Python API to control the agent.

(For AWS) If you'd like to train the agent on AWS (and have not enabled a virtual screen), then please use this link to obtain the "headless" version of the environment. You will not be able to watch the agent without enabling a virtual screen, but you will be able to train the agent. (To watch the agent, you should follow the instructions to enable a virtual screen, and then download the environment for the Linux operating system above.)