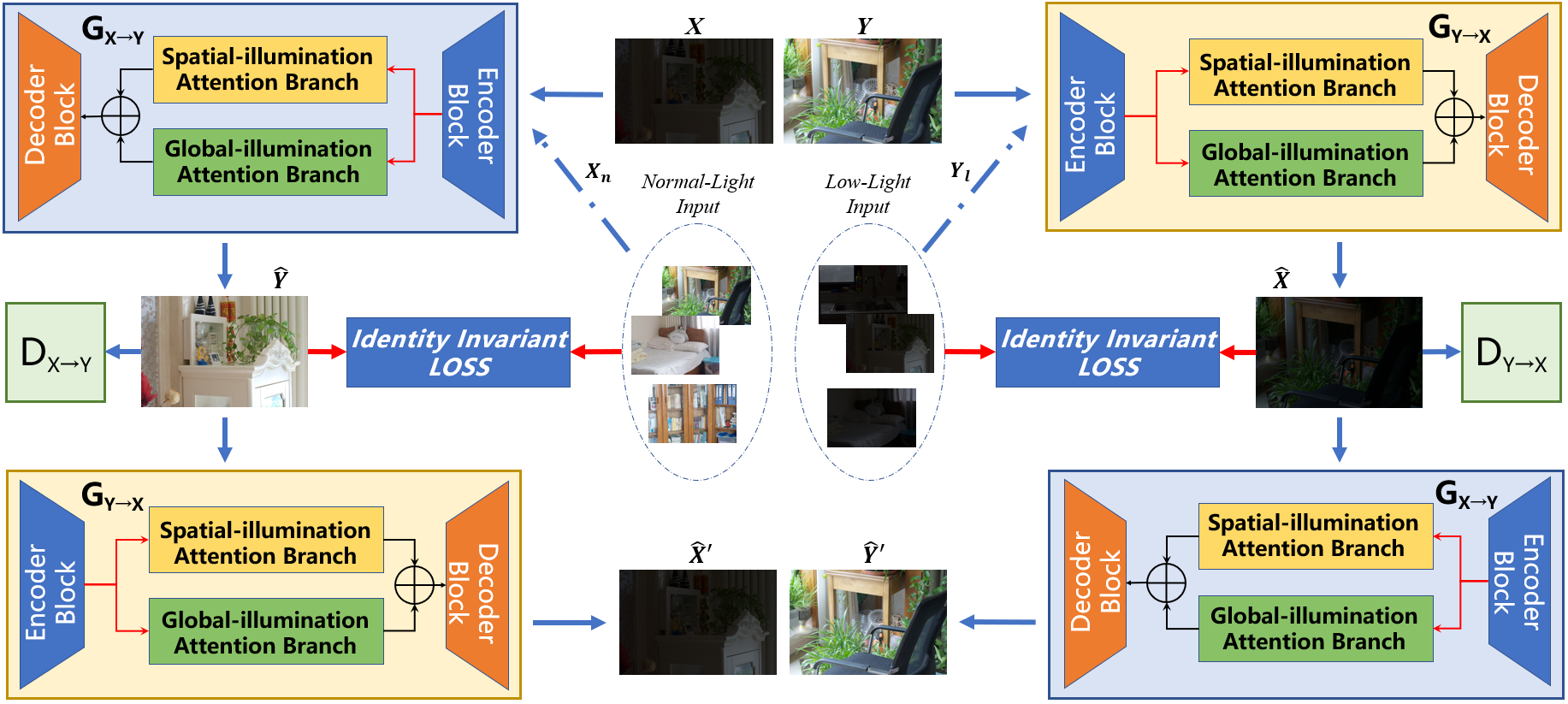

LE-GAN: Unsupervised Low-light Image Enhancement Network using Attention Module and Identity Invariant Loss

This repository provides the Paired Normal/Low-light Images (PNLI) dataset and the PyTorch implementation of "LE-GAN: Unsupervised Low-light Image Enhancement Network using Attention Module and Identity Invariant Loss", published in Knowledge-Based Systems (KBS), 2022, by Ying Fu, Yang Hong, Linwei Chen, and Shaodi You.

The code is tested on Python 3.7, PyTorch 1.8.2, TorchVision 0.8.2, but lower versions are also likely to work.

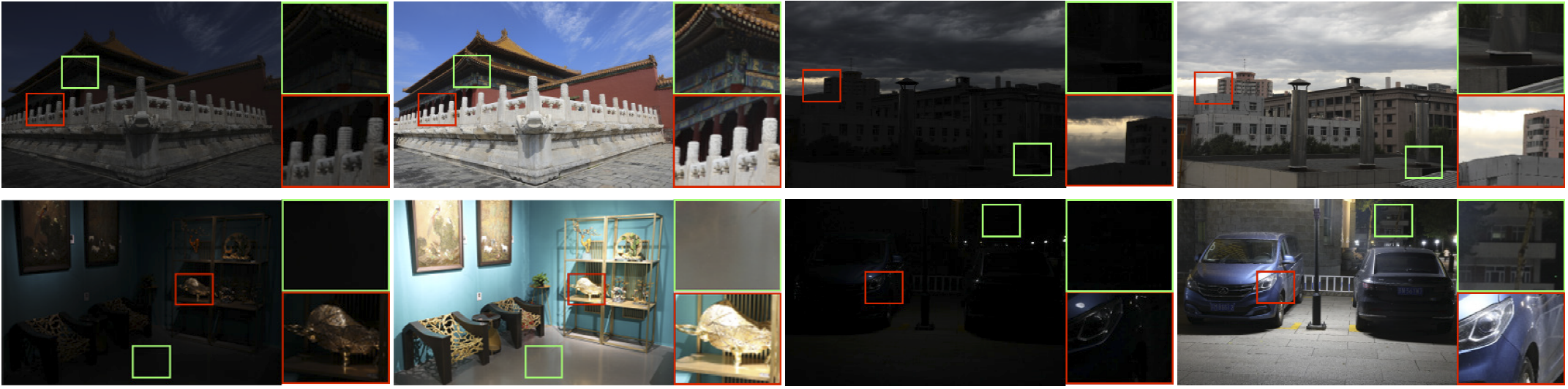

Images for PNLI

- Normal-light data (Ground-truth). (Extraction Code: 2022)

- Low-light data. (Extraction Code: 2022)

Tips:

- We provide all files on [Baidu Drive]; the extraction code for all files is "2022".

- Each low-light image corresponds to exactly one normal-light image, which serves as its ground truth.

- All images have purely numeric file names, and paired long/short-exposure (normal-light/low-light) images are linked by number: low-light image file name = normal-light image file name + 1. For example, for "1.JPG", the file name of the corresponding low-light image is "2.JPG".
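The naming rule above can be turned into a small pairing helper. The following is a minimal sketch, not part of the released code: it assumes the normal-light and low-light archives have been extracted into two separate folders, and the function names `low_light_name` and `pair_pnli_images` are hypothetical.

```python
from pathlib import Path


def low_light_name(normal_name: str) -> str:
    """Map a normal-light file name to its low-light partner (number + 1)."""
    path = Path(normal_name)
    return f"{int(path.stem) + 1}{path.suffix}"


def pair_pnli_images(normal_dir, low_dir):
    """Return (normal_path, low_path) pairs, sorted by the normal-light number.

    Assumption: each folder holds only one side of the pairs and every
    image has a purely numeric file name, e.g. "1.JPG".
    """
    normal_dir, low_dir = Path(normal_dir), Path(low_dir)
    # Keep only files whose stem is a plain number; skip anything else.
    normal_files = [
        p for p in normal_dir.iterdir() if p.is_file() and p.stem.isdecimal()
    ]
    pairs = []
    for normal_path in sorted(normal_files, key=lambda p: int(p.stem)):
        low_path = low_dir / low_light_name(normal_path.name)
        # Skip normal-light images whose partner is missing.
        if low_path.exists():
            pairs.append((normal_path, low_path))
    return pairs
```

Calling `pair_pnli_images("normal", "low")`, where both folder names are placeholders, would yield a list of path pairs that can be fed to an evaluation script. Normal-light images without a matching low-light file are skipped silently rather than raising an error.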

If you use our dataset or code for research, please ensure that you cite our paper:

Ying Fu, Yang Hong, Linwei Chen, and Shaodi You, "LE-GAN: Unsupervised low-light image enhancement network using attention module and identity invariant loss", in Knowledge-Based Systems, 2022, 240: 108010.

@article{fu2022gan,
  title={LE-GAN: Unsupervised low-light image enhancement network using attention module and identity invariant loss},
  author={Fu, Ying and Hong, Yang and Chen, Linwei and You, Shaodi},
  journal={Knowledge-Based Systems},
  volume={240},
  pages={108010},
  year={2022},
  publisher={Elsevier}
}

If you have any additional questions, please email [email protected]