Official implementation of our WACV 2023 paper "Proactive Deepfake Defence via Identity Watermarking", covering both training and evaluation.

The explosive progress of Deepfake techniques poses unprecedented privacy and security risks to our society by creating real-looking but fake visual content. The current Deepfake detection studies are still in their infancy because they mainly rely on capturing artifacts left by a Deepfake synthesis process as detection clues, which can be easily removed by various distortions (e.g. blurring) or advanced Deepfake techniques. In this paper, we propose a novel method that does not depend on identifying the artifacts but resorts to the mechanism of anti-counterfeit labels to protect face images from malicious Deepfake tampering. Specifically, we design a neural network with an encoder-decoder structure to embed watermarks as anti-Deepfake labels into the facial identity features. The injected label is entangled with the facial identity feature, so it will be sensitive to face swap translations (i.e., Deepfake) and robust to conventional image modifications (e.g., resize and compress). Therefore, we can identify whether watermarked images have been tampered with by Deepfake methods according to the label's existence. Experimental results demonstrate that our method can achieve average detection accuracy of more than 80%, which validates the proposed method's effectiveness in implementing Deepfake detection.

The proposed Face Identity Watermarking framework invisibly embeds user-generated or pseudo-random sequences into the identity features of target face images to defend against malicious Deepfakes.

- Linux or macOS

- NVIDIA GPU + CUDA CuDNN (CPU may be possible with some modifications, but is not inherently supported)

- Python 3

Please download datasets and unzip images into a folder for training.

- We experiment on three datasets (you can choose one of them as the training set).

Please download pre-trained identity encoder models for training.

- Our framework requires a pre-trained identity encoder network for all actions. Choose one of the supported encoders (ArcFace or CurricularFace), save its pre-trained model in the folder `saved_models`, and then set the corresponding command-line argument `facenet_mode`.

python scripts/training.py \
--facenet_mode=arcface \
--facenet_dir='./saved_models' \
--exp_dir=/directory/to/output \
--trainimg_dir='/directory/to/training images set' \
--valimg_dir='/directory/to/validation images set'

where

- `facenet_mode` assigns the identity encoder's framework, which must be one of [ArcFace | CurricularFace]; the default is ArcFace.
- `facenet_dir` indicates the directory that contains the pre-trained model of the identity encoder.
- `exp_dir` contains model snapshots, image snapshots, and log files.
- `trainimg_dir` and `valimg_dir` point to the folders containing images for training and validation.

Our pre-trained models can be downloaded from here. The folder names indicate which data set the models are trained on. Please save the downloaded files into the folder pretrained_models.

| Model | Description |
|---|---|
| Identity Encoder | Pre-trained face recognition network. The last feature vector generated before the final fully-connected layer is used as the identity representation of the input image. |
| Attributes Encoder | U-Net style network. The feature maps generated by the U-Net decoder represent the attributes of the input face image. |
| AAD Generator | Image reconstruction network that adopts multiple cascaded AAD Residual Blocks (ResBlk) to integrate the identity and attributes. |
| Multi-Scale Discriminator | Network taken from phillipi for adversarial training. |

Here we show some samples of Injection results.

Comparison between watermarked and non-watermarked images' Deepfake results

Watermarked images using different sequences.

python scripts/injection.py \
--rand_select=Yes \
--max_num=1000 \
--facenet_mode=arcface \
--facenet_dir='./saved_models' \
--aadblocks_dir='./pretrained_models' \
--attencoder_dir='./pretrained_models' \
--seq_type=gold \
--exp_dir=/directory/to/output \
--img_dir=/directory/to/images

where

- `rand_select` indicates whether to randomly select images for injection.
- `max_num` indicates the maximum number of images to be selected.
- `facenet_mode` and `facenet_dir` must be consistent with the pre-trained models, e.g., if you download ArcFace's pre-trained model, you must set `facenet_mode` to `arcface` and point `facenet_dir` to the folder containing that pre-trained identity encoder model.
- `aadblocks_dir` indicates the directory of the pre-trained AAD Generator model.
- `attencoder_dir` indicates the directory of the pre-trained Attributes Encoder model.
- `seq_type` indicates the type of sequence you want to embed in the images. Four options are available: [mls, gold, gaussian, laplace].
- `exp_dir` contains the injection results.
- `img_dir` points to the cover images.
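To give a feel for what the `seq_type` options refer to, the following is a minimal, standard-library sketch of three of the four sequence families. It is not the code used by `scripts/injection.py`: the function names, the sequence length, the LFSR taps, and the seeding are all assumptions made for illustration, and Gold codes are left out because they need a preferred pair of maximum length sequences.

```python
import random


def mls(nbits=5, taps=(5, 2), seed_state=1):
    """Maximum length sequence from a Fibonacci LFSR, mapped to +1/-1.

    Assumption: the default taps give a primitive polynomial for nbits=5,
    so the period is 2**5 - 1 = 31. Other sizes need other taps.
    """
    state = [(seed_state >> i) & 1 for i in range(nbits)]
    out = []
    for _ in range(2 ** nbits - 1):
        out.append(1 if state[-1] else -1)
        feedback = 0
        for tap in taps:
            feedback ^= state[tap - 1]
        state = [feedback] + state[:-1]  # shift the register by one position
    return out


def make_sequence(seq_type, length=31, seed=0):
    """Return a hypothetical watermark sequence of the requested family."""
    rng = random.Random(seed)  # fixed seed so the sequence can be regenerated
    if seq_type == "mls":
        return mls()  # length is fixed by the register size in this sketch
    if seq_type == "gaussian":
        return [rng.gauss(0.0, 1.0) for _ in range(length)]
    if seq_type == "laplace":
        # The difference of two unit exponentials is Laplace(0, 1) distributed.
        return [rng.expovariate(1.0) - rng.expovariate(1.0) for _ in range(length)]
    raise ValueError(f"unsupported seq_type in this sketch: {seq_type}")


if __name__ == "__main__":
    for name in ("mls", "gaussian", "laplace"):
        print(name, make_sequence(name, length=8)[:8])
```

Whatever sequence is embedded has to be available again at detection time, which is why the later commands take a `seq_dir` argument pointing to the saved sequence file.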

Please follow the instructions in the Injection section to download and save the required pre-trained models.

- If you want to analyse the correlation results, run analysis, e.g.,

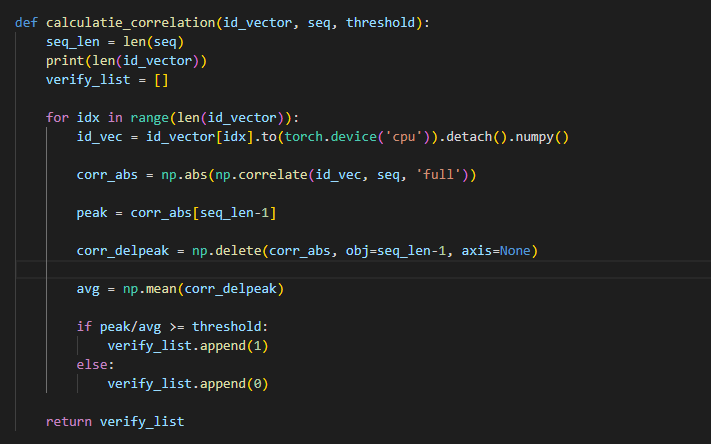

python scripts/analysis.py \
--perturbation=No \
--visual_correlation=Yes \
--peak_threshold=5 \
--seq_dir='/directory/to/saved sequence .txt file' \
--img_dir=/directory/to/images \
--facenet_mode=arcface \
--facenet_dir='./pretrained_models'

where

- `perturbation` indicates whether to apply perturbations to the input images as a robustness test.
- `visual_correlation` indicates whether to visualize the correlation curve.
- `peak_threshold` indicates the decision threshold used to determine whether the image contains a watermark.
- `seq_dir` points to the folder that contains the sequence .txt file.
- `img_dir` contains the images to be analyzed.
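The role of `peak_threshold` can be illustrated with a small sketch of correlation-peak detection. This is an assumption about the general technique, not the logic in `scripts/analysis.py`: here the signal recovered from an image is circularly correlated with the saved sequence, and the peak is scored by how many standard deviations it stands above the remaining correlation values. The function names and the scoring rule are hypothetical.

```python
import math
import random
import statistics


def circular_correlation(signal, sequence):
    """Correlate signal with sequence at every circular shift."""
    n = len(sequence)
    if len(signal) != n:
        raise ValueError("signal and sequence must have the same length")
    return [
        sum(signal[(i + shift) % n] * sequence[i] for i in range(n))
        for shift in range(n)
    ]


def peak_score(corr):
    """Height of the largest value above the rest, in standard deviations.

    Expects at least three correlation values.
    """
    peak_index = max(range(len(corr)), key=corr.__getitem__)
    rest = corr[:peak_index] + corr[peak_index + 1:]
    spread = statistics.pstdev(rest)
    if spread == 0.0:
        # Perfectly flat background: any peak above it is unambiguous.
        return math.inf if corr[peak_index] > rest[0] else 0.0
    return (corr[peak_index] - statistics.fmean(rest)) / spread


def contains_watermark(signal, sequence, peak_threshold=5.0):
    """Hypothetical decision rule: a watermark is present if the peak is high enough."""
    return peak_score(circular_correlation(signal, sequence)) >= peak_threshold


if __name__ == "__main__":
    rng = random.Random(0)
    sequence = [rng.gauss(0.0, 1.0) for _ in range(256)]
    marked = [s + rng.gauss(0.0, 1.0) for s in sequence]  # sequence plus noise
    clean = [rng.gauss(0.0, 1.0) for _ in range(256)]     # no sequence at all
    print("marked:", contains_watermark(marked, sequence))
    print("clean: ", contains_watermark(clean, sequence))
```

Under this scoring rule, a higher `peak_threshold` reduces false alarms on images without a watermark, at the cost of missing watermarks weakened by strong perturbations.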

- If you want to conduct Deepfake detection among Deepfaked and authentic images, run evaluation, e.g.,

python scripts/evaluation.py \
--peak_threshold=5 \
--seq_dir='/directory/to/saved sequence .txt file' \
--facenet_mode=arcface \
--facenet_dir='./saved_models' \
--imgpos_dir='/directory/to/positive(real) images' \
--imgneg_dir='/directory/to/negative(fake) images'

where

- `imgpos_dir` contains the authentic images.
- `imgneg_dir` contains the Deepfake images.
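As a rough illustration of how a detection accuracy could be computed from the two folders, the sketch below assumes each image has already been reduced to a single watermark score (for example a correlation peak height). An authentic image counts as correct when its score reaches the threshold, and a Deepfake image counts as correct when it does not. The function name and the score lists are hypothetical, and the exact metric in `scripts/evaluation.py` may differ.

```python
def detection_accuracy(pos_scores, neg_scores, peak_threshold=5.0):
    """Fraction of images classified correctly under a single threshold.

    pos_scores: scores of authentic (watermark expected) images.
    neg_scores: scores of Deepfake (watermark expected to be destroyed) images.
    """
    total = len(pos_scores) + len(neg_scores)
    if total == 0:
        raise ValueError("at least one score is required")
    true_pos = sum(score >= peak_threshold for score in pos_scores)
    true_neg = sum(score < peak_threshold for score in neg_scores)
    return (true_pos + true_neg) / total


if __name__ == "__main__":
    # Made-up scores, purely to show the call.
    authentic = [9.1, 7.4, 4.2]
    deepfake = [1.3, 2.8, 6.0]
    print(detection_accuracy(authentic, deepfake, peak_threshold=5.0))
```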

- If you want to detect the watermark in images, run extraction, e.g.,

python scripts/extraction.py \
--peak_threshold=5 \
--perturbation=No \
--seq_dir='/directory/to/saved sequence .txt file' \
--img_dir=/directory/to/images \
--facenet_mode=arcface \
--facenet_dir='./pretrained_models'

where

- `peak_threshold` indicates the decision threshold used to determine whether the image contains a watermark.
- `perturbation` indicates whether to apply perturbations to the input images as a robustness test.
- `seq_dir` points to the folder that contains the sequence .txt file.
- `img_dir` contains the images to be checked for a watermark.

If you find this code useful or use it in your research, please cite our paper:

@inproceedings{zhao2023proactive,
  title={Proactive Deepfake Defence via Identity Watermarking},
  author={Zhao, Yuan and Liu, Bo and Ding, Ming and Liu, Baoping and Zhu, Tianqing and Yu, Xin},
  booktitle={Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision},
  pages={4602--4611},
  year={2023}
}